The Push: May 9th, 2026

Desktop agents, shared AI memory, and a local brain for your meetings, inbox, and messy recurring work

UI Tars Desktop: AI That Clicks Through Chaos

github.com/bytedance/UI-TARS-desktop | License: Apache-2.0

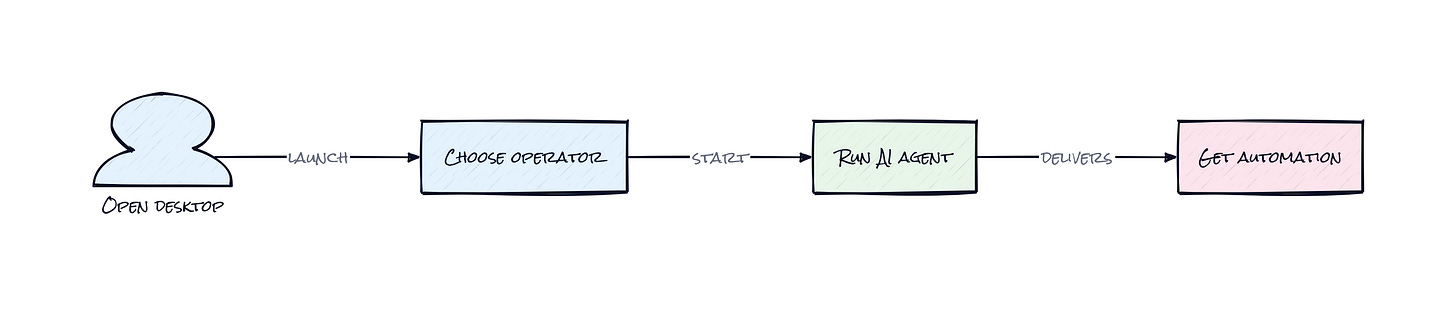

Booking a flight, filing expenses, pulling numbers from some cursed internal dashboard: half of modern work still happens by clicking around interfaces built for humans, not APIs. That gap is where UI Tars Desktop gets interesting. Instead of waiting for every app to expose clean integrations, this repo gives an AI eyes and hands on a real screen. Honestly, that matters more than another chatbot wrapper. Software already runs the world, but a shocking amount of it remains visually operated, brittle, and disconnected. This project treats the GUI itself as the integration layer.

The Drop: Where API Dreams Meet Ugly Reality

Zapier-style automation looks clean in demos because the apps involved already speak structured data. Real workflows do not. Finance tools hide actions behind modal stacks. Airline sites reshuffle buttons mid-checkout. Internal enterprise software was clearly designed by committee and then abandoned. Even browser automation breaks when the page changes enough that selectors stop matching.

UI Tars Desktop exists because the last mile of automation is still painfully manual. A lot of valuable work lives inside interfaces that were never meant to be programmable, or were technically programmable but in such limited ways that the useful steps still require a person staring at the screen. That is the frustration: the intelligence exists, the intent exists, but execution keeps collapsing into brittle scripts or outsourced clicking.

ByteDance is betting that computer use and browser operators are the more practical bridge. Instead of demanding every service expose a pristine API, the agent works with what already exists: pixels, layouts, buttons, forms, tabs. That makes the scope wider and messier, but also way more realistic. The interesting gap here is not AI reasoning in the abstract. It is operational access to the software people already use.

The Stack: Electron With Agent Plumbing

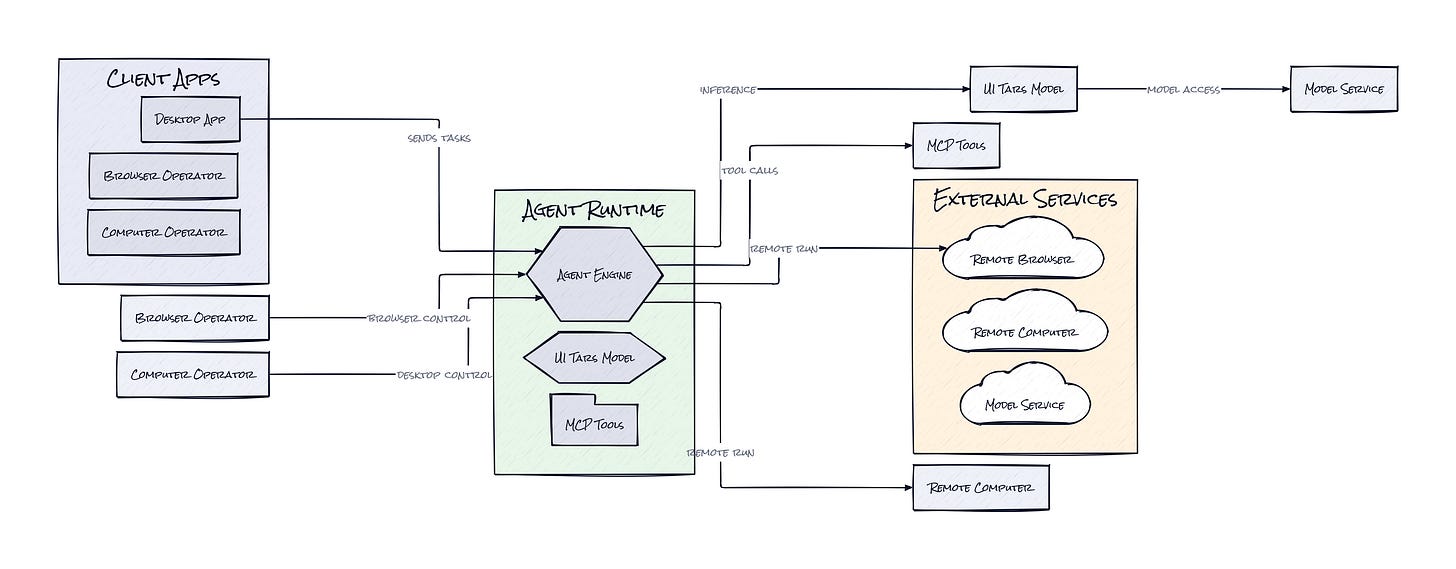

Under the hood, UI Tars Desktop is a TypeScript-heavy Electron app with a monorepo structure, plus Playwright-style browser control, desktop input orchestration, and model-provider hooks for multimodal reasoning. Agent TARS, MCP integration, and a small telemetry layer called UTIO connect the front end, tool layer, and model back end into one operator stack.

The Sauce: Vision Is Not Enough, Control Loops Matter

Plenty of AI demos can identify a button on a screenshot. Fewer can turn that perception into a reliable action system that survives real-world mess. UI Tars Desktop gets interesting because it is built as an operator architecture, not just a vision model wrapped in a chat box.

At the center is a loop that binds screen observation, action planning, and execution into a persistent runtime. The agent does not merely caption what is on screen. It keeps state across a task, chooses whether to act locally or remotely, and can operate either a full computer session or a browser session depending on the job. That distinction matters. Browser tasks benefit from web-native affordances, while desktop tasks need broad permissions and direct input control. Combining both in one stack gives the system range without pretending every workflow belongs in a tab.
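The observe-plan-act loop described above can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not UI TARS Desktop's actual code: the `Operator` interface, the `Planner` type, the `Action` union, and `runTask` are hypothetical names, and the planner stands in for the multimodal model call. The point it shows is that task state (the action history) lives in the loop, while the thing being controlled sits behind an interface that a local desktop, a browser, or a remote machine could each implement.

```typescript
// Hypothetical action vocabulary; the real project defines its own action space.
type Action =
  | { kind: "click"; x: number; y: number }
  | { kind: "type"; text: string }
  | { kind: "finished"; summary: string };

// What the agent sees each turn (a text stand-in for a screenshot here).
interface Observation {
  screen: string;
}

// Hypothetical operator boundary: local desktop, browser, or remote machine.
interface Operator {
  observe(): Promise<Observation>;
  execute(action: Action): Promise<void>;
}

// Maps task + latest observation + history to the next action.
// In a real system this would be a multimodal model call.
type Planner = (task: string, obs: Observation, history: Action[]) => Promise<Action>;

interface TaskResult {
  done: boolean;
  steps: Action[];
  summary?: string;
}

// Observe, plan, act, repeat. Stops when the planner says "finished"
// or when the step budget runs out.
async function runTask(task: string, operator: Operator, plan: Planner, maxSteps = 20): Promise<TaskResult> {
  const history: Action[] = [];
  for (let step = 0; step < maxSteps; step++) {
    const obs = await operator.observe();
    const action = await plan(task, obs, history);
    history.push(action);
    if (action.kind === "finished") {
      return { done: true, steps: history, summary: action.summary };
    }
    await operator.execute(action);
  }
  return { done: false, steps: history };
}

// Fake operator: the "screen" shows a form until something is clicked.
class FakeOperator implements Operator {
  private submitted = false;
  async observe(): Promise<Observation> {
    return { screen: this.submitted ? "confirmation page" : "form with submit button" };
  }
  async execute(action: Action): Promise<void> {
    if (action.kind === "click") this.submitted = true;
  }
}

// Scripted planner: click until the confirmation page appears, then finish.
const scriptedPlanner: Planner = async (_task, obs): Promise<Action> =>
  obs.screen.includes("confirmation")
    ? { kind: "finished", summary: "form submitted" }
    : { kind: "click", x: 100, y: 200 };

runTask("submit the form", new FakeOperator(), scriptedPlanner).then((r) =>
  console.log(r.done, r.steps.length, r.summary),
);
```

Running it prints `true 2 form submitted`: one click, then a "finished" action once the confirmation screen is observed. The step budget is the simplest guard against an agent that never converges on a cluttered screen.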

Another smart choice is the repo’s Remote Computer Operator and Remote Browser Operator model. That turns the machine being controlled into a service boundary, not just a local app. In practice, that means safer isolation, easier demos, and a path to shared agent infrastructure where tasks run somewhere else but remain inspectable from your device. Add Event Stream support on the Agent TARS side, and the stack starts to look less like a single app and more like an observability-friendly execution layer for GUI agents. That is far more useful than a flashy one-off automation. The architecture is saying: agent actions should be streamable, debuggable, and portable across environments.
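To make "streamable, debuggable, and portable" concrete, here is a minimal in-memory event stream. It is an illustrative sketch and does not reproduce Agent TARS's Event Stream API: the `AgentEvent` shape, the `EventStream` class, the replay-on-subscribe behavior, and the JSON-lines export are all assumptions made for this example. It shows why treating every observation and action as a sequenced record helps: a viewer on another device can join mid-run and still see the full history, and the whole run serializes into something you can store or diff.

```typescript
// Hypothetical event kinds for an operator run.
type EventKind = "observation" | "action" | "result";

interface AgentEvent {
  seq: number; // position in the stream, increasing by one per event
  at: number; // timestamp from the injected clock
  kind: EventKind;
  detail: string; // e.g. a screen summary, a serialized action, or an outcome
}

type Listener = (event: AgentEvent) => void;

class EventStream {
  private events: AgentEvent[] = [];
  private listeners: Listener[] = [];
  private nextSeq = 0;
  private readonly clock: () => number;

  // The clock is injectable so that replays and tests are deterministic.
  constructor(clock: () => number = () => Date.now()) {
    this.clock = clock;
  }

  // Record an event and push it to every live subscriber.
  emit(kind: EventKind, detail: string): AgentEvent {
    const event: AgentEvent = { seq: this.nextSeq++, at: this.clock(), kind, detail };
    this.events.push(event);
    for (const listener of this.listeners) listener(event);
    return event;
  }

  // Late subscribers first receive the history, then live events.
  // Returns a function that unsubscribes the listener.
  subscribe(listener: Listener): () => void {
    for (const event of this.events) listener(event);
    this.listeners.push(listener);
    return () => {
      this.listeners = this.listeners.filter((l) => l !== listener);
    };
  }

  // One JSON object per line: easy to ship, store, or diff between runs.
  toJsonLines(): string {
    return this.events.map((e) => JSON.stringify(e)).join("\n");
  }
}

let tick = 0;
const stream = new EventStream(() => tick++);
stream.emit("observation", "login page");
stream.emit("action", "click(120, 340)");

const seen: string[] = [];
const unsubscribe = stream.subscribe((e) => seen.push(`${e.seq}:${e.kind}`));
stream.emit("result", "logged in");
unsubscribe();
stream.emit("observation", "dashboard");

console.log(seen); // seen: 0:observation, 1:action, 2:result
console.log(stream.toJsonLines().split("\n").length); // 4
```

The subscriber joined after two events but still received them, then stopped receiving once it unsubscribed, while the stream itself kept all four records.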

The Move: Turn Repetitive Ops Into Agent Territory

Operations teams, growth teams, support leads, and founders drowning in admin can use UI Tars Desktop as a wedge into workflows that are too messy for standard automation. Start with high-friction tasks that already have clear success conditions, e.g. pulling weekly metrics from a dashboard, checking competitor pricing across a handful of sites, testing onboarding flows, or completing repetitive back-office steps in legacy tools.

Strategically, the win is not just time saved per task. The win is getting automation into systems where no one wants to fund a formal integration project. That makes this repo useful inside startups with duct-taped internal processes and inside larger companies stuck with old enterprise software. A PM could prototype an agent-run QA flow. An ops lead could create a repeatable browser-based runbook. A founder could pressure-test whether a task is worth turning into a product at all.

Because the stack supports local and remote operation, teams can also separate experimentation from production. Test on a sandboxed remote machine, watch the execution behavior, then decide whether the workflow deserves deeper tooling. That is a very practical way to validate automation before sinking engineering time into custom software.
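One way to encode "test on a sandbox, then decide" is a promotion gate: run the same workflow several times against a sandboxed target, check an explicit success condition after each run, and only promote it when the pass rate clears a threshold. The sketch below is hypothetical; `Workflow`, `validateWorkflow`, the default of 10 runs, and the 0.9 threshold are illustrative choices, not features of UI TARS Desktop. It also reflects the earlier advice to start with tasks that have clear success conditions, since the gate is only as good as the `succeeded` check.

```typescript
// A workflow run returns whatever state it produced; the success condition is
// checked separately, so "the agent finished" and "the result is right" stay distinct.
interface Workflow<S> {
  name: string;
  run: () => Promise<S>;
  succeeded: (state: S) => boolean;
}

interface ValidationReport {
  name: string;
  runs: number;
  passes: number;
  passRate: number;
  promote: boolean;
}

// Runs sequentially (one sandbox, one session at a time) and recommends
// promotion only if the pass rate meets the threshold. A thrown error
// counts as a failed run instead of aborting the whole validation.
async function validateWorkflow<S>(wf: Workflow<S>, runs = 10, threshold = 0.9): Promise<ValidationReport> {
  let passes = 0;
  for (let i = 0; i < runs; i++) {
    try {
      const state = await wf.run();
      if (wf.succeeded(state)) passes++;
    } catch {
      // crashes, timeouts, and lost sessions are treated as failures
    }
  }
  const passRate = runs > 0 ? passes / runs : 0;
  return { name: wf.name, runs, passes, passRate, promote: passRate >= threshold };
}

// Example: a flaky dashboard pull that times out on every fourth attempt.
let attempt = 0;
const weeklyMetrics: Workflow<{ rows: number }> = {
  name: "pull weekly metrics",
  run: async () => {
    attempt++;
    if (attempt % 4 === 0) throw new Error("dashboard timed out");
    return { rows: 12 };
  },
  succeeded: (s) => s.rows > 0,
};

// passes: 6 of 8, passRate 0.75, promote: false
validateWorkflow(weeklyMetrics, 8).then((report) => console.log(report));
```

A 75% pass rate would keep this workflow in the sandbox, which is exactly the signal that it needs better prompts, a more stable target, or deeper tooling before anyone relies on it.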

The Aura: Software Stops Asking for So Much Patience

People have been trained to tolerate bad interfaces because the alternative was doing nothing. Click through this. Re-enter that. Open the same dashboard again because someone forgot export access. UI Tars Desktop chips away at that learned helplessness.

What changes here is expectation. If an AI can operate software the way a competent assistant would, users stop accepting the idea that every digital task deserves full human attention. That does not mean interfaces disappear. It means attention becomes the scarce resource, and clicking becomes optional. The deeper thesis feels simple: once agents can act across screens, software quality is judged less by whether humans can navigate it and more by whether machines can complete outcomes through it.

The Play: Owning the Messy Automation Layer

This looks like a better mousetrap in a very large existing market, but with a shot at 0-to-1 distribution because GUI-native automation reaches software that APIs never touched. TAM is huge, spanning enterprise automation, QA, customer operations, and consumer task execution. The repo already shows early PMF signals: more than 31,000 stars, strong community energy, and a product surface broad enough to attract both hobbyists and infrastructure builders.

The moat is not raw model access; that will commoditize. The sticky part is execution telemetry, workflow tuning, and trust in long-running operator loops across desktop and browser contexts. If teams start building business processes around observable GUI agents, switching costs rise fast, because the hard part is not the prompt but the operational layer.

Winners:

AtoB: Faster back-office automation for fleet payments compounds because internal ops teams can patch over broken vendor workflows without waiting for formal integrations.

Mercor: Lower-cost candidate and recruiter operations improve as agent-run browser tasks absorb scheduling, sourcing, and CRM upkeep across fragmented hiring tools.

Intuit: Broader reach into small-business workflows strengthens if GUI agents can work across the messy long tail of third-party finance software that never exposed decent APIs.

Losers:

Parcha: Narrow compliance workflow products get squeezed when horizontal GUI agents can handle semi-structured reviewer tasks without bespoke product surfaces.

Klarna: Labor-arbitrage-heavy service ops lose edge if merchants can automate support and back-office clicks directly on top of existing software.

SAP: Interface complexity becomes a bigger liability when buyers realize agents can sit on top of legacy systems, reducing the urgency to buy native workflow add-ons.

tl;dr

UI Tars Desktop turns a multimodal model into a real desktop and browser operator, not just a screenshot commentator. The clever bit is the execution architecture: local and remote control, observable action streams, and one stack for both desktop and browser tasks. Ops teams, PMs, and automation-minded founders should take a look.

Stars: 31,208 | Language: TypeScript