The Push: May 8th, 2026

Routing tricks, stealth Chromium, and a modular playbook for getting more from AI coding

9Router: AI Usage Arbitrage, Productized

github.com/decolua/9router | License: MIT

Claude Code billing feels cute right up until a long debugging session eats quota, rate limits hit mid-task, and the “AI pair programmer” suddenly turns into a dead dashboard. That pain gets worse when teams juggle Cursor, Copilot, Codex, and random provider credits across half a dozen accounts. 9Router lands right in that mess. Instead of treating model access like a single subscription, it treats it like traffic management: route every request to the cheapest available model that is still good enough, and keep the coding session alive.

The Drop: When AI Coding Hits Its Budget Ceiling

Plenty of AI coding products sell a smooth front end. Fewer deal with the ugly operational truth: coding agents are token furnaces, especially when tool calls start dumping logs, diffs, grep output, and file listings back into context. A simple “fix this bug” flow can quietly turn into dozens of expensive round trips. Then come the other annoyances: quota resets that waste paid capacity, provider outages, expiring OAuth tokens, and the constant manual switching between endpoints when one model gets flaky.

9Router exists because this is no longer a power-user edge case. It is the default experience for anyone trying to rely on AI coding seriously. The repo’s thesis is blunt: model access should behave like infrastructure, not like a fragile SaaS seat. That means every request should have a backup path, every subscription should be squeezed before reset, and every verbose tool response should be compressed before it burns more budget. Honestly, the frustration here is less “AI is expensive” and more “AI access is badly orchestrated.”

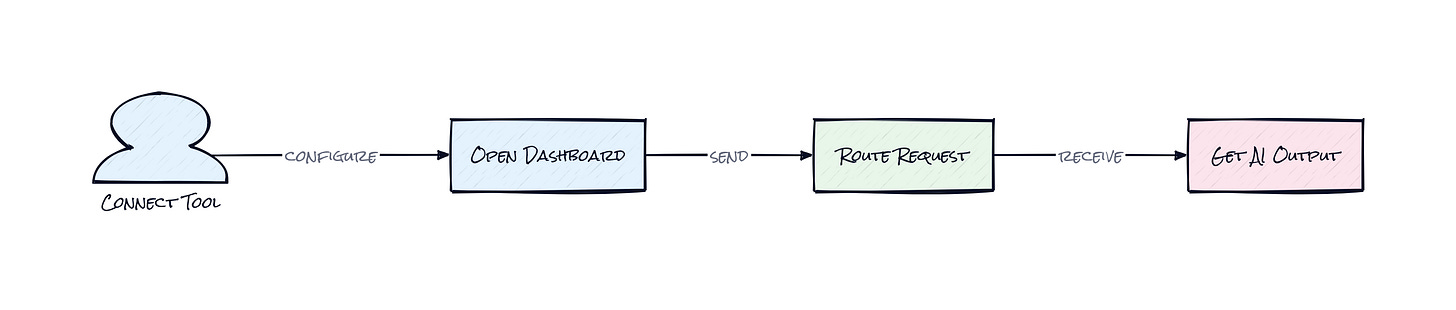

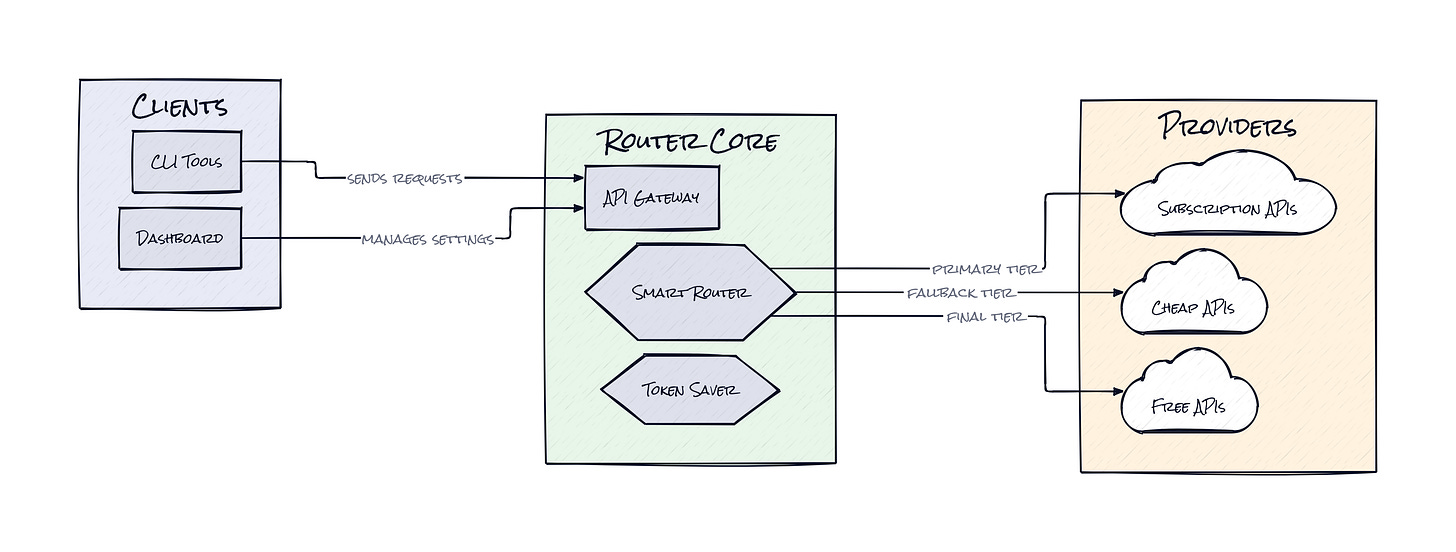

The Stack: A Local Gateway With a Translation Brain

Under the hood, 9Router is primarily JavaScript, with a local server and dashboard built around Next.js plus a routing layer that exposes an OpenAI-compatible API to coding tools. A modular executor system handles provider-specific behavior, while streaming handlers, OAuth services, and lightweight local storage keep sessions, quotas, and failover logic coordinated in one place.

The Sauce: Compatibility Is Table Stakes, Routing Logic Is the Product

What stands out is the combination of RTK Token Saver, auto-fallback, and the executor-based provider layer. RTK is the repo’s compression trick for tool output, especially bulky `tool_result` content that coding agents constantly generate. That matters because coding workflows are weirdly wasteful: the expensive part often is not the model’s reasoning, it is the agent shoveling giant chunks of terminal output back into context. Trimming that overhead by 20 to 40 percent changes the economics fast.
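To make the idea concrete, here is a minimal Python sketch of tool-output compression. It is not RTK's actual algorithm, which the repo defines for itself; the function names, the head/tail limits, and the bracketed marker text are all assumptions chosen for illustration. The sketch collapses runs of identical lines, then keeps only the start and end of whatever remains.

```python
def collapse_repeats(lines: list[str]) -> list[str]:
    """Replace each run of identical consecutive lines with one line plus a marker."""
    out: list[str] = []
    prev = None
    repeats = 0  # extra copies of `prev` seen so far
    for line in lines:
        if line == prev:
            repeats += 1
            continue
        if repeats:
            out.append(f"[previous line repeated {repeats} more times]")
        out.append(line)
        prev = line
        repeats = 0
    if repeats:
        out.append(f"[previous line repeated {repeats} more times]")
    return out


def compress_tool_output(text: str, head: int = 20, tail: int = 20) -> str:
    """Hypothetical compressor: dedupe repeats, then keep only the first and last lines."""
    lines = collapse_repeats(text.splitlines())
    if len(lines) <= head + tail:
        return "\n".join(lines)
    omitted = len(lines) - head - tail
    kept = lines[:head] + [f"[{omitted} lines omitted]"] + lines[len(lines) - tail:]
    return "\n".join(kept)
```

Keeping the head and tail is a common heuristic for logs, since the command and the final error usually sit at the edges. A real compressor would need to be more careful about what it drops.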

Beneath that, 9Router behaves like a smart broker for model traffic. The local endpoint makes Claude Code, Codex, Copilot, Cursor, and similar tools believe they are talking to one normal API. Behind the curtain, the router translates formats across providers, tracks quota state, rotates across multiple accounts, refreshes auth when needed, and demotes requests across pricing tiers: subscription first, then cheap models, then free ones. That architecture is clever because it turns fragmented AI access into a single reliability surface.
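The tiered fallback can be sketched in a few lines of Python. This is an illustration under stated assumptions, not 9Router's code: `Provider`, `ProviderUnavailable`, `route`, and the numeric tier values are hypothetical names invented here. Each provider is tried in tier order, and a failure on one simply moves the request to the next.

```python
from dataclasses import dataclass
from typing import Callable


class ProviderUnavailable(Exception):
    """Hypothetical error for an exhausted quota, a rate limit, or an outage."""


@dataclass
class Provider:
    name: str
    tier: int  # assumed ordering: 0 = subscription, 1 = cheap paid, 2 = free
    call: Callable[[str], str]  # sends the prompt, returns the completion text


def route(prompt: str, providers: list[Provider]) -> tuple[str, str]:
    """Try providers from the lowest tier number up; return (provider name, reply)."""
    errors = []
    for provider in sorted(providers, key=lambda p: p.tier):
        try:
            return provider.name, provider.call(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and fall through to the next tier.
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

The caller sees one function and one reply, whichever provider answered. That is the "single reliability surface" in miniature.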

Another subtle win: the executor pattern. Each provider gets its own adapter for auth quirks, request formatting, and streaming behavior, while the outer interface stays stable. Think Stripe’s payment abstraction, but for chaotic model endpoints. That makes 9Router less of a one-off proxy and more of a compatibility layer with policy built in. The interesting part is not that it connects to 40-plus providers. It is that the repo treats model routing as an optimization problem with cost, availability, and context size all in play at once.
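A rough Python sketch of the executor idea follows. The class names and both provider formats are hypothetical, not taken from the repo. It assumes the incoming request uses an OpenAI-style chat shape (`model` plus a `messages` list of role/content pairs), and that one imagined provider wants the system prompt as a top-level field rather than as a message.

```python
from abc import ABC, abstractmethod


class Executor(ABC):
    """One adapter per provider; the router only ever calls build_request."""

    @abstractmethod
    def build_request(self, request: dict) -> dict:
        ...


class PassthroughExecutor(Executor):
    """For a hypothetical provider that already accepts the incoming format."""

    def build_request(self, request: dict) -> dict:
        return dict(request)


class SystemFieldExecutor(Executor):
    """For a hypothetical provider that takes the system prompt as a top-level field."""

    def build_request(self, request: dict) -> dict:
        messages = request["messages"]
        system_parts = [m["content"] for m in messages if m["role"] == "system"]
        body = {
            "model": request["model"],
            "messages": [m for m in messages if m["role"] != "system"],
        }
        if system_parts:
            body["system"] = "\n".join(system_parts)
        return body


# Illustrative registry: adding a provider means adding one entry here.
EXECUTORS: dict[str, Executor] = {
    "passthrough": PassthroughExecutor(),
    "system_field": SystemFieldExecutor(),
}


def translate(provider: str, request: dict) -> dict:
    """Look up the provider's adapter and build its request body."""
    return EXECUTORS[provider].build_request(request)
```

Auth quirks and streaming behavior would hang off the same adapter in a fuller version. The sketch covers only request formatting.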

The Move: Turn AI Spend Into a Routing Problem

Founders, students, and small product teams can use 9Router as a control plane for AI coding without buying fully into one vendor’s pricing model. Point Claude Code, Codex, or Copilot at the local endpoint, connect a mix of paid and free providers, and define a practical traffic strategy: premium models for hard reasoning, lower-cost models for repetitive edits, free capacity as overflow. That setup gives a team continuity first, savings second.
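One way to picture such a traffic strategy is as a small policy table. The Python sketch below is an assumption for illustration, not 9Router's configuration format: the task kinds, tier names, and the `remaining` budget dictionary are all hypothetical.

```python
# Hypothetical policy: preferred paid tiers per task kind, in order of preference.
POLICY = {
    "reasoning": ["premium", "low_cost"],
    "edit": ["low_cost"],
}


def pick_tier(task_kind: str, remaining: dict[str, int]) -> str:
    """Return the first preferred tier with budget left, else overflow to 'free'."""
    for tier in POLICY.get(task_kind, ["low_cost"]):
        if remaining.get(tier, 0) > 0:
            return tier
    return "free"
```

With this table, `pick_tier("reasoning", {"premium": 0, "low_cost": 10})` picks `low_cost`, and an edit request with no low-cost budget left overflows to `free`.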

Another strong use case is experimentation. Instead of changing behavior every time a subscription caps out, 9Router lets a team keep the same workflow while swapping the back-end economics. That is strategically useful because habits compound. Once people trust that coding sessions will not randomly die, AI usage goes up, and the organization gets clearer signal on where premium inference actually matters. For anyone managing budgets, this repo also creates a new lens: stop asking “Which model should be bought?” and start asking “Which requests deserve the expensive route?”

The Aura: Reliability Changes Behavior Before Intelligence Does

Developers, PMs, and founders do not need perfect AI to change workflow. They need dependable AI. The human unlock here is psychological: when model access stops feeling scarce, people stop hoarding prompts and start using AI continuously, e.g. for tiny refactors, quick debugging passes, and exploratory code edits that would have felt too wasteful before. That shifts AI from occasional assistant to ambient utility. Scarcity trained people to ration. Routing teaches them to expect uptime.

The Play: Infrastructure Arbitrage Hiding in Plain Sight

This looks less like a pure 0-to-1 category and more like a sharp wedge into the emerging AI gateway market, except the wedge is unusually strong because it targets coding workflows where token burn, latency sensitivity, and failure intolerance are all high. TAM is broader than “developer tools” in the narrow sense. Any company standardizing AI-assisted software work eventually needs policy, routing, usage optimization, and provider abstraction. The repo already shows early PMF signals: 5,336 stars in a short window, broad tool compatibility, multilingual docs, and community-generated tutorials. Moat is not raw IP. Moat could become switching costs through configuration gravity, usage data, and fast execution across messy provider surfaces.

Winners:

DoubleZero: Lower AI coding infrastructure costs make lean engineering teams more productive earlier, which compounds into faster product iteration without matching headcount growth.

Anysphere competitor PearAI: Better access to cheap and fallback inference reduces dependency on premium model margins, improving gross margin and giving more room to compete on UX.

Anthropic: Increased routing makes premium models easier to reserve for high-value moments, which can expand paid usage rather than shrink it.

Losers:

Melty: Endpoint commoditization erodes any thin differentiation built on wrapping model access, and adaptation is hard when routing becomes expected plumbing.

Cursor competitor Windsurf: Bundled inference becomes less defensible when users can bring their own optimized back-end path and avoid getting trapped in one pricing stack.

Copilot: Flat subscription logic looks weaker when power users can squeeze more uptime and lower effective cost from an external router.

tl;dr

9Router turns AI coding access into a local routing layer that squeezes token waste, swaps between providers, and keeps sessions alive when quotas or rate limits hit. The clever bit is the policy engine around compatibility, not just the proxy. Worth a look for anyone relying on AI coding daily and tired of paying retail for unreliable access.

Stars: 5,336 | Language: JavaScript