The Push: May 6th, 2026

Portable agent workflows, a private research memory, and a truly independent browser stack worth keeping an eye on

Agent Skills: Prompting Was Never the Point

github.com/addyosmani/agent-skills | License: MIT

Watching an AI coding tool build software can feel weirdly familiar. It nails the obvious parts, then skips the boring senior-engineer habits that actually keep products alive: no spec, no test discipline, no rollback plan, just confident motion. Agent Skills exists for that exact failure mode. Instead of treating coding agents like magical interns, it gives them a repeatable operating system for how solid engineering work gets done. Honestly, that framing matters more than whatever model happens to be trendy this month.

The Drop: The Senior Engineer Habits Bottleneck

Plenty of AI coding demos look impressive right until the second or third change request. A feature gets generated fast, but the invisible scaffolding is missing: product definition, task breakdown, interface boundaries, quality checks, deployment caution. Human teams know these rituals are annoying, but they also know why they exist. They prevent expensive messes later.

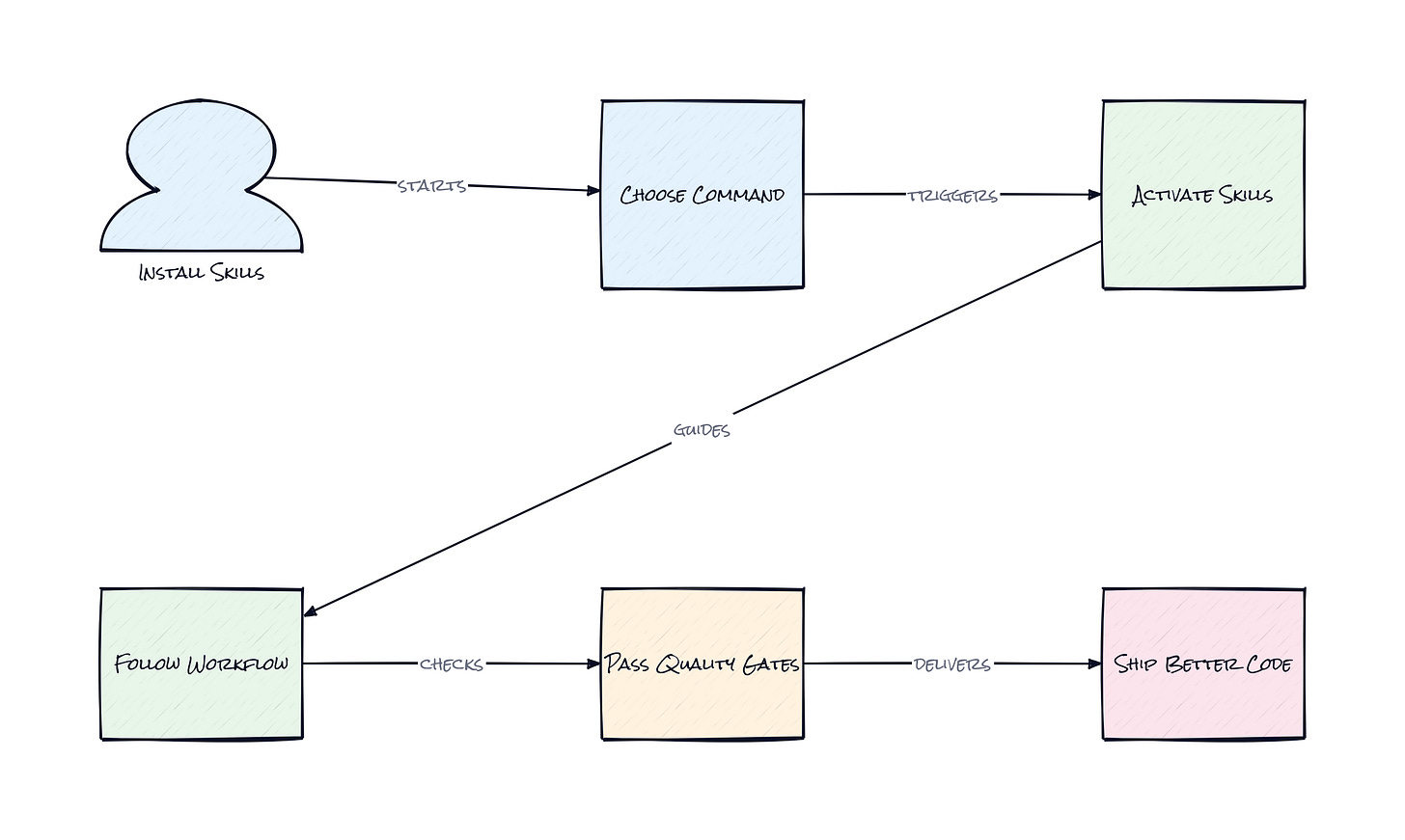

Agent Skills comes from that gap between code generation and production judgment. The repo packages engineering workflows into reusable instructions that coding agents can follow across the full lifecycle, from idea refinement to launch. That means the agent is asked not just to write code but to enter a structured sequence: define the goal, split the work, build in slices, verify behavior, review quality, then ship safely.

What makes the pain real is consistency. A talented engineer can remember to do all that. An AI agent usually does whatever the current prompt over-rewards. Without guardrails, every session starts from scratch, and every output reflects the user's prompt quality more than the team's standards. That is the frustration this repo is attacking.

The Stack: Markdown as Control Plane

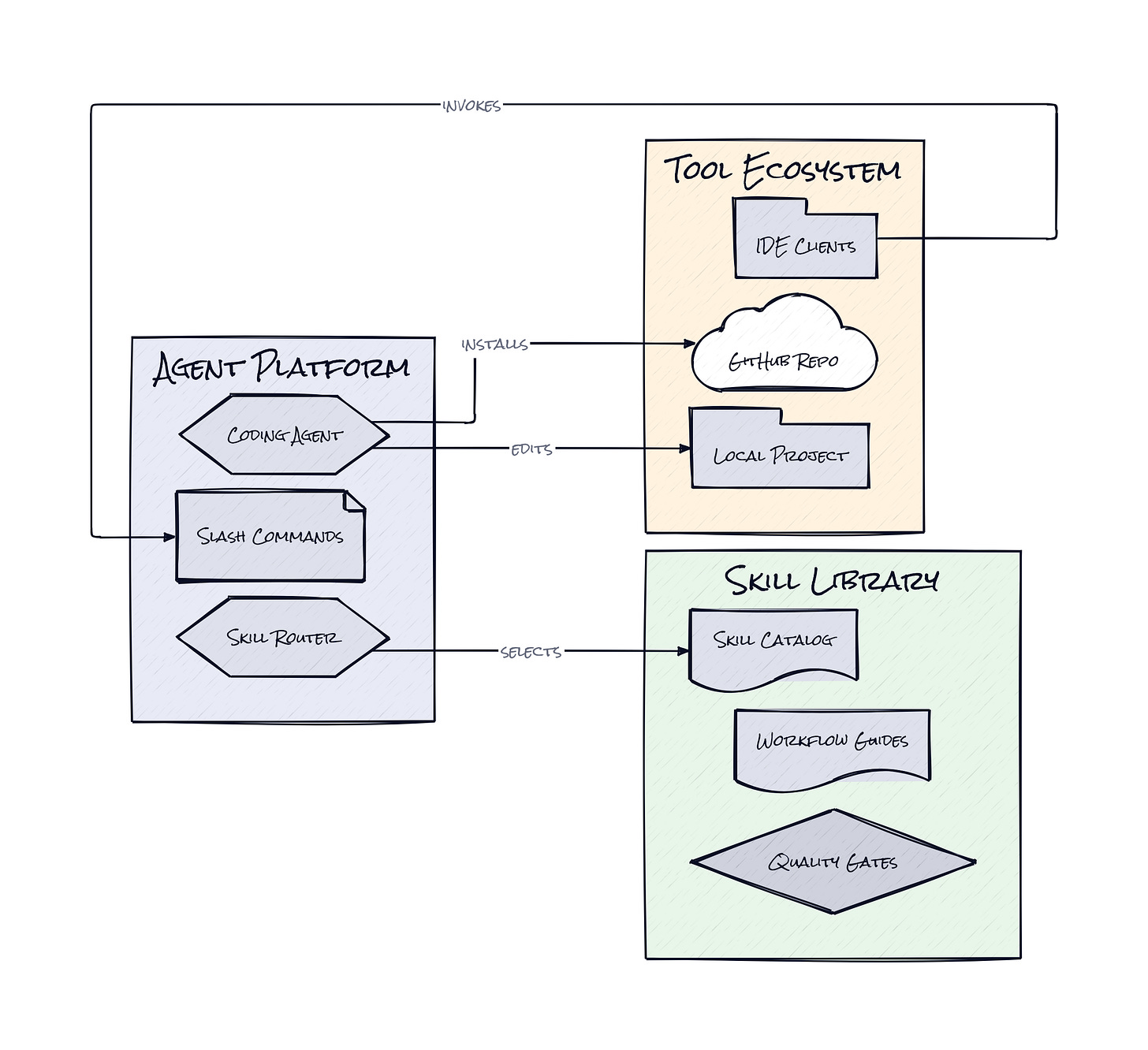

Under the hood, Agent Skills is surprisingly plain, and that is part of the appeal. The repo is powered mostly by Markdown-native skills, plus Shell scripts and lightweight config for tools like Claude Code, Gemini CLI, Copilot, Windsurf, and Cursor-style rules systems. Slash commands act as entry points, while the same workflow logic gets reused across different agent surfaces.

The Sauce: Workflow Packaging Beats Prompt Craft

Seven lifecycle commands (/spec, /plan, /build, /test, /review, /code-simplify, and /ship) are the architectural center here. Each command is not just a shortcut; it is a trigger for a bundle of opinionated behaviors that map to a real engineering phase. That sounds simple, but it solves a nasty coordination problem in AI coding: agents are good at local execution and bad at preserving process across time.
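To make the "preserving process across time" idea concrete, here is a minimal Python sketch of the seven commands treated as an ordered lifecycle. The strict linear ordering and the helper name `missing_prerequisites` are assumptions for illustration; the repo itself is Markdown and Shell, and it may not enforce ordering this way.

```python
# Hypothetical sketch: the seven lifecycle commands as an ordered pipeline.
# The strict ordering is an assumption made for illustration only.
LIFECYCLE = ["/spec", "/plan", "/build", "/test", "/review", "/code-simplify", "/ship"]


def missing_prerequisites(command: str, completed: set[str]) -> list[str]:
    """Return the earlier lifecycle phases that have not been completed yet."""
    if command not in LIFECYCLE:
        raise ValueError(f"unknown command: {command}")
    index = LIFECYCLE.index(command)
    # Every phase before the requested one must already be in `completed`.
    return [phase for phase in LIFECYCLE[:index] if phase not in completed]


if __name__ == "__main__":
    done = {"/spec", "/plan", "/build"}
    print(missing_prerequisites("/ship", done))
```

An agent wrapper built this way could refuse to run /ship while the returned list is non-empty, which is one simple way to stop a session from skipping straight to the confident part.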

Agent Skills treats process as modular infrastructure. Beneath those commands sit around twenty structured skills, each encoded as a reusable workflow with steps, verification gates, and anti-rationalization checks. That last part is especially smart. The repo assumes agents will try to explain away missing evidence, skipped tests, or fuzzy requirements, because language models are built to keep going. So the design inserts explicit friction where human engineers usually slow down and ask, "prove this."
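A rough way to picture a skill with verification gates is as a small data structure where a gate only counts as passed when evidence is attached. The Python below is a hypothetical model, not the repo's format: the class names `Skill` and `Gate`, the field names, and the example gates are all invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Gate:
    """A checkpoint that needs concrete evidence, not a claim (hypothetical model)."""
    name: str
    evidence_required: str


@dataclass
class Skill:
    """A reusable workflow: ordered steps plus the gates that guard them."""
    name: str
    steps: list[str]
    gates: list[Gate] = field(default_factory=list)

    def unmet_gates(self, evidence: dict[str, str]) -> list[str]:
        # A gate with missing or blank evidence is treated as unmet, which is
        # the "prove this" friction: saying tests pass is not enough.
        return [g.name for g in self.gates if not evidence.get(g.name, "").strip()]


if __name__ == "__main__":
    ship = Skill(
        name="ship",
        steps=["run tests", "write rollback plan", "deploy"],
        gates=[
            Gate("tests_pass", "test runner output"),
            Gate("rollback_plan", "link to rollback steps"),
        ],
    )
    print(ship.unmet_gates({"tests_pass": "12 passed", "rollback_plan": "  "}))
```

The design choice worth noticing is that the check looks at evidence rather than at a boolean the agent sets itself, so an agent cannot talk its way past a gate.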

There is also a subtle plugin strategy. The repo is not tied to one IDE or one model vendor. Skill portability matters because the real asset is the behavioral layer, not the chat window. A team can carry the same operating discipline across Claude Code, Gemini CLI, Copilot, or any agent that accepts instruction files. That makes Agent Skills feel less like a prompt library and more like open-source middleware for AI development behavior. The interesting part is not the commands themselves. It is the idea that engineering judgment can be serialized, versioned, and distributed.
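The portability claim can be sketched as one skill definition rendered once and written to several agent-specific locations. Everything in the Python below is an assumption: the target names and paths in `TARGETS` are placeholders, not the real file conventions of Claude Code, Gemini CLI, Copilot, or the repo.

```python
# Hypothetical sketch: one source of truth, many agent surfaces.
# The target names and path templates are placeholders, not real tool conventions.
TARGETS = {
    "agent-a": "agent-a/skills/{name}.md",
    "agent-b": "agent-b/rules/{name}.md",
}


def render_skill(name: str, steps: list[str]) -> str:
    """Render a skill as a plain Markdown instruction file."""
    lines = [f"# Skill: {name}", ""]
    lines += [f"{number}. {step}" for number, step in enumerate(steps, start=1)]
    return "\n".join(lines) + "\n"


def plan_outputs(name: str, steps: list[str]) -> dict[str, str]:
    """Map each target path to the same rendered body (nothing is written to disk)."""
    body = render_skill(name, steps)
    return {template.format(name=name): body for template in TARGETS.values()}


if __name__ == "__main__":
    for path, body in plan_outputs("spec", ["state the goal", "list constraints"]).items():
        print(path)
        print(body)
```

Because every target receives the identical body, the skill can be versioned in one place and diffed like any other file, which is the practical meaning of serialized and distributed judgment.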

The Move: Turn Standards Into Distribution

Founders, PMs, and technical operators can use Agent Skills as a way to reduce variance across AI-assisted product work. The obvious move is dropping it into an existing coding agent setup so every build request starts from a spec and ends with review and ship checks. That alone cuts down on the classic AI failure mode where speed creates hidden cleanup debt.

Another angle is organizational. A startup with one strong technical lead can encode that person's standards into the team's default agent behavior. Instead of repeating the same review comments, architecture rules, and launch cautions across every project, those norms become reusable instructions. That compounds. New hires ramp faster, contractors operate with clearer boundaries, and prototype work has a better chance of surviving contact with production.

Consultancies and agencies should notice this too. Packaging a house engineering method into portable agent workflows turns internal taste into a productized advantage. The repo also hints at a broader strategy: if AI tools are becoming the interface to software creation, the winners may be the teams that own the process layer sitting on top of the models, not just access to the models themselves.

The Aura: Competence Becomes Configurable

Junior builders are about to expect senior-level scaffolding by default. That is the deeper behavioral change here. Instead of learning process only through painful mistakes or code review trauma, people can inherit a structured way of working from day one.

Agent Skills nudges software creation toward a world where discipline is embedded in the tool, not remembered by the most tired person on the team. That changes confidence. It also changes trust. If an AI agent can reliably show specs, tests, review logic, and rollout thinking, the conversation shifts from "can this write code?" to "can this operate responsibly?" That is a much bigger threshold.

The Play: Open-Source Process Becomes a Product Layer

This looks less like 0-to-1 category creation and more like a sharp wedge into a fast-growing control layer for AI-assisted software development. TAM is broad because every company experimenting with coding agents has the same issue: output quality is volatile, and volatility kills trust. Agent Skills shows early PMF signals through strong star velocity, broad tool compatibility, and obvious community remix potential.

The moat is not raw code. It is distribution, encoded best practices, and eventual switching costs once teams bake these workflows into daily delivery habits. If process becomes the sticky layer above commodity models, CAC can stay low through open-source adoption while LTV expands via team rollout, governance, analytics, and enterprise policy controls.

Winners:

Graphite: Review workflows get stronger as AI-generated code volume rises, and tighter feedback loops compound into defensible team habits.

Codeium: Enterprise coding adoption gets easier when buyers can wrap agents in visible guardrails, increasing seat expansion and reducing trust friction.

ServiceNow: Internal developer platforms become more valuable when AI output can be routed through standardized approval and release processes.

Losers:

Fine: Autonomous coding positioning gets weaker if buyers increasingly demand explicit process controls over end-to-end agent independence.

Linear: Planning gravity softens when more specification, breakdown, and verification work happens inside agent-native workflows instead of standalone project tools.

Cisco: Generic enterprise tooling loses influence if software teams prefer lightweight, open, configurable process layers over heavyweight top-down systems.

tl;dr

Agent Skills turns senior engineering habits into portable workflows for AI coding agents. The clever part is not better prompts; it is packaging specs, planning, testing, review, and shipping as reusable behavioral infrastructure. Teams experimenting with Claude Code, Copilot, or Gemini-style agents should pay attention.

Stars: 29,825 | Language: Shell