The Push: May 11th, 2026

Smarter code habits, AI content ops, and a desktop memory layer that actually remembers your work

React Doctor: AI React Needs Guardrails

github.com/millionco/react-doctor | License: MIT

A pull request lands, the demo still works, and the code somehow feels off. State is bouncing through effects, components are doing too much, accessibility got quietly worse, and the AI assistant that wrote half the diff seems very proud of itself. That is the new frontend tax. React Doctor goes after that exact pain: not whether code compiles, but whether a React app is getting structurally worse while everyone ships faster. Honestly, that distinction matters more than another autocomplete upgrade.

The Drop: When Fast Code Starts Aging Badly

Chat-based coding tools are great at producing React that looks plausible in screenshots and passes basic checks. Trouble starts a week later. A feature that should have been a simple state update now rerenders too often, data fetching lives in the wrong place, and tiny anti-patterns stack into a codebase nobody wants to touch. Traditional linting catches syntax-y mistakes and some style issues, but it often misses the higher-order stuff that makes React apps feel brittle.

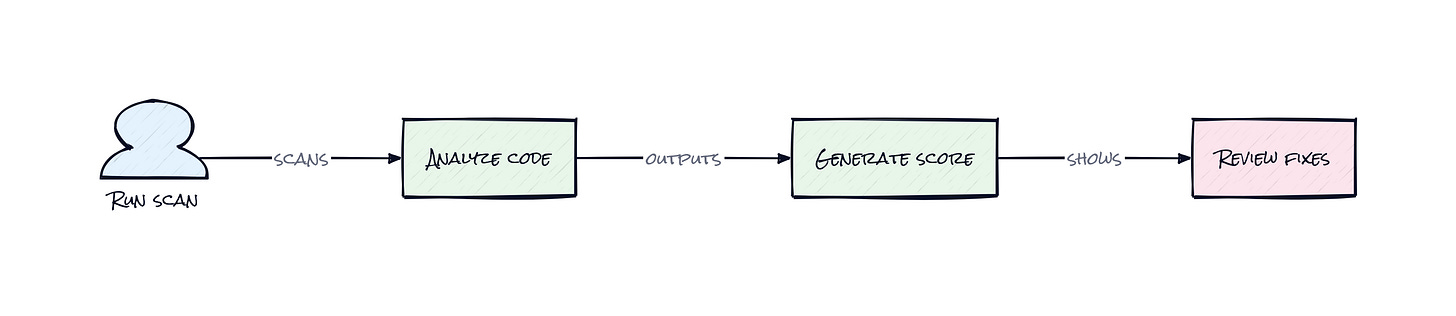

React Doctor exists because AI coding changed the failure mode. The bottleneck is no longer writing enough code. The bottleneck is keeping generated code aligned with how modern React, Next.js, Vite, and React Native apps are supposed to behave. That includes state and effects, bundle size, architecture, security, accessibility, and dead code, all wrapped into a health score that turns messy qualitative judgment into a number teams can track.
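To make the "health score" idea concrete, here is a minimal sketch of how findings across those categories could roll up into one number. Everything in it is an assumption for illustration: the `Finding` shape, the category names, the penalty weights, and the 0 to 100 scale are hypothetical, not React Doctor's actual output format or scoring formula.

```typescript
// Hypothetical types: NOT React Doctor's real diagnostic format.
type Category =
  | "state-effects"
  | "bundle-size"
  | "architecture"
  | "security"
  | "accessibility"
  | "dead-code";

type Severity = "error" | "warning";

interface Finding {
  category: Category;
  severity: Severity;
}

// Assumed penalty weights, chosen only for illustration.
const PENALTY: Record<Severity, number> = { error: 5, warning: 1 };

// Start at 100 and subtract a penalty per finding, never dropping below 0.
function healthScore(findings: Finding[]): number {
  const total = findings.reduce((sum, f) => sum + PENALTY[f.severity], 0);
  return Math.max(0, 100 - total);
}

// Count findings per category so a team can see where the points went.
function countByCategory(findings: Finding[]): Partial<Record<Category, number>> {
  const counts: Partial<Record<Category, number>> = {};
  for (const f of findings) {
    counts[f.category] = (counts[f.category] ?? 0) + 1;
  }
  return counts;
}

const sample: Finding[] = [
  { category: "state-effects", severity: "error" },
  { category: "accessibility", severity: "warning" },
  { category: "dead-code", severity: "warning" },
];

console.log(healthScore(sample)); // 100 - (5 + 1 + 1) = 93
console.log(countByCategory(sample));
```

The point of a rollup like this is not the exact arithmetic. It is that a single tracked number makes "is this getting worse?" answerable without rereading every diff.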

Another frustration sits underneath this: code review does not scale when every assistant can generate huge diffs. React Doctor makes that flood more legible. Instead of arguing about vibes in a PR, teams get diagnostics, thresholds, and a clearer signal on whether shipping faster is also making the product worse.

The Stack: A Linter With Opinions

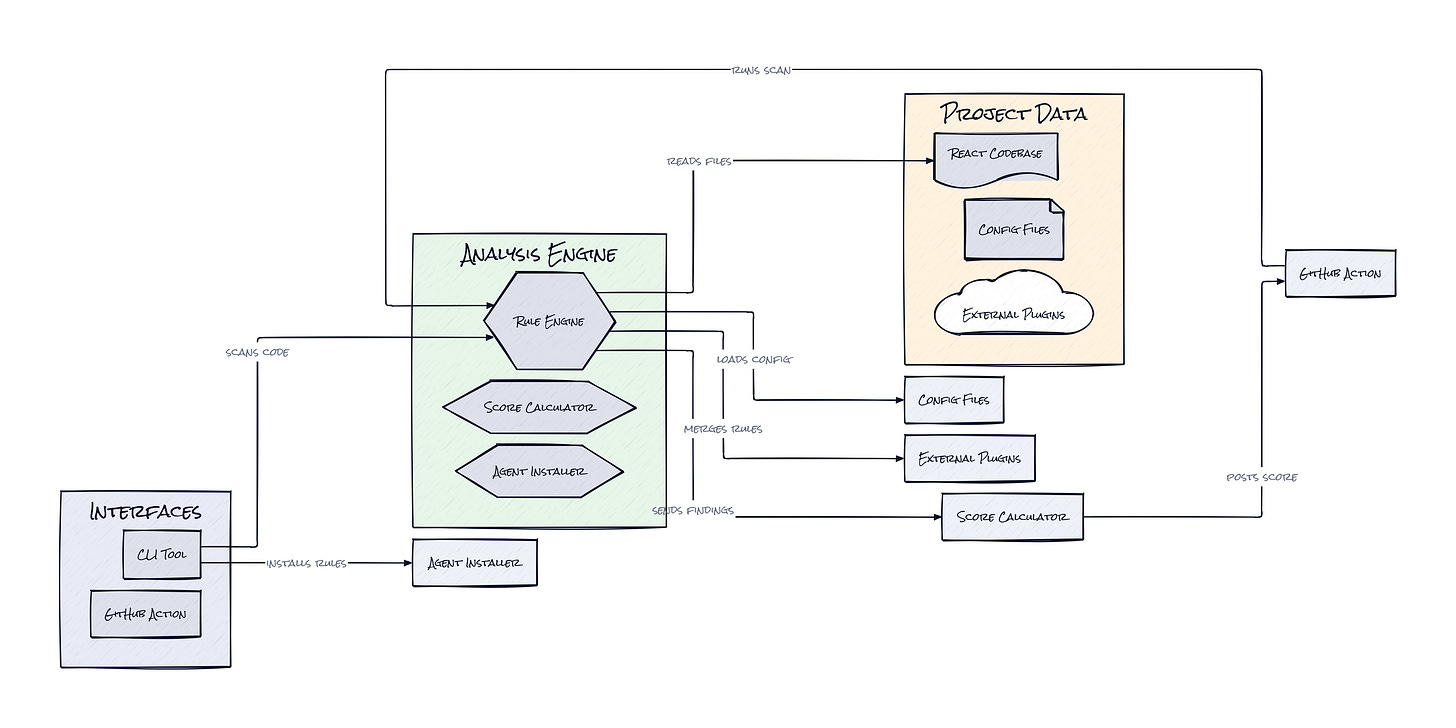

Under the hood, React Doctor is a TypeScript project built around a CLI scanner, plus companion plugins for ESLint and Oxlint. It also folds in dead-code detection and framework-aware rules, then plugs into GitHub Actions and coding agents, which is why the package feels less like a single tool and more like a quality layer.

The Sauce: Scoring the Architecture, Not Just the Syntax

One architectural choice makes React Doctor stand out: it combines framework-aware static analysis with a single diagnostic score that can be used by humans, CI, and agents at the same time. That sounds simple, but it is a smart packaging move. Plenty of tools can emit warnings. Fewer can turn those warnings into something a team can baseline, enforce in pull requests, and hand back to an AI assistant as feedback.

Several details make that work. React Doctor adapts rules based on the project context: Next.js versus React Native, the detected React setup, existing lint configuration, ignored files, and even optional companion plugins. That means the scanner is not pretending every React app should follow one universal checklist. It builds a context-sensitive model of what “healthy” means for this codebase, then reports findings in a format that works for local scans, diff-only checks, machine-readable JSON, or GitHub annotations.
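A rough sketch of what context-sensitive rule selection can look like in practice. The rule ids, the `ProjectContext` shape, and the prefix-based ignore matching are all hypothetical stand-ins, assumed here to show the idea rather than to mirror React Doctor's internals.

```typescript
// Hypothetical model of project context: NOT React Doctor's real config.
type Framework = "nextjs" | "vite" | "react-native";

interface ProjectContext {
  framework: Framework;
  ignoredFiles: string[]; // assumed to be simple path prefixes
}

interface Rule {
  id: string;
  appliesTo: Framework[] | "all";
}

// Made-up rule ids, for illustration only.
const RULES: Rule[] = [
  { id: "no-derived-state-in-effect", appliesTo: "all" },
  { id: "no-client-fetch-in-server-route", appliesTo: ["nextjs"] },
  { id: "no-inline-styles-in-list-items", appliesTo: ["react-native"] },
];

// Keep only the rules that make sense for the detected framework.
function selectRules(ctx: ProjectContext, rules: Rule[] = RULES): string[] {
  return rules
    .filter((r) => r.appliesTo === "all" || r.appliesTo.includes(ctx.framework))
    .map((r) => r.id);
}

// Skip any file that falls under an ignored path prefix.
function shouldScan(ctx: ProjectContext, file: string): boolean {
  return !ctx.ignoredFiles.some((prefix) => file.startsWith(prefix));
}

const nextApp: ProjectContext = { framework: "nextjs", ignoredFiles: ["generated/"] };

console.log(selectRules(nextApp));
// ["no-derived-state-in-effect", "no-client-fetch-in-server-route"]
console.log(shouldScan(nextApp, "generated/api.ts")); // false
console.log(shouldScan(nextApp, "app/page.tsx")); // true
```

The design choice worth noticing: the checklist is a function of the project, so a React Native app never gets nagged about Next.js routing, and vice versa.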

The more interesting layer is the agent integration. The repo includes agent install flows and skills, effectively letting teams push React best practices upstream into the assistant before bad patterns hit the codebase. That is clever because the product is not just a reviewer. It is becoming a policy distribution system for AI coding. Think Notion templates, but for frontend architecture rules. Add the explain mode, which tells you why a rule fired or why a suppression failed, and the whole thing starts to look less like linting and more like an operating system for React code quality.

The Move: Turn PR Quality Into a Managed Metric

Founders and product teams should read this less as a dev utility and more as a control system. The obvious use case is dropping React Doctor into CI, setting a fail threshold, and posting comments on pull requests. That immediately gives every frontend repo a shared quality bar, especially when AI-generated diffs start getting larger than any reviewer wants to read carefully.

The smarter usage is more strategic. Run scans on the main branch to establish a baseline, then use diff mode for new work so teams are not blocked by old debt. Feed the score into dashboards next to velocity metrics. Install the agent skills so Cursor, Claude Code, or Codex starts producing cleaner React in the first place. For agencies and internal platform teams, React Doctor can become a standard across multiple client apps, which quietly reduces review time and onboarding friction.
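The baseline-then-diff approach can be sketched as a small gate function. This is a hedged illustration, not React Doctor's CI logic: the `GateInput` fields, the `maxDrop` tolerance, and the idea of comparing two plain scores are assumptions made for the example.

```typescript
// Hypothetical CI gate: NOT React Doctor's actual threshold mechanism.
interface GateInput {
  baselineScore: number; // score recorded on the main branch
  currentScore: number; // score for the branch under review
  maxDrop: number; // how many points of regression the team tolerates
}

type GateResult = { pass: true } | { pass: false; reason: string };

// Fail only when the branch regresses past the tolerated drop.
// Old debt baked into the baseline does not block new work.
function evaluateGate(input: GateInput): GateResult {
  const drop = input.baselineScore - input.currentScore;
  if (drop > input.maxDrop) {
    return {
      pass: false,
      reason: `score dropped ${drop} points (allowed: ${input.maxDrop})`,
    };
  }
  return { pass: true };
}

// A legacy repo with a mediocre baseline still passes if the PR holds the line.
console.log(evaluateGate({ baselineScore: 62, currentScore: 61, maxDrop: 2 }));
// { pass: true }

// A PR that makes things noticeably worse is blocked, with a reason attached.
console.log(evaluateGate({ baselineScore: 62, currentScore: 55, maxDrop: 2 }));
// { pass: false, reason: "score dropped 7 points (allowed: 2)" }
```

Gating on regression rather than on an absolute floor is what keeps an older codebase shippable on day one while still stopping new damage.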

There is also a brand angle. If a startup ships consumer product surfaces in React, code quality is product quality: faster interactions, fewer regressions, cleaner accessibility, smaller bundles, less frontend entropy. React Doctor gives teams a way to defend those outcomes with evidence, not just senior engineer intuition.

The Aura: Taste Becomes Systematized

Code review used to be where engineering taste lived. A senior person would say, “this works, but don’t structure it like that,” and the lesson spread slowly. AI coding breaks that social loop because code appears faster than taste can be transferred. React Doctor turns those unwritten norms into something explicit, repeatable, and machine-readable.

That changes expectations. Teams start assuming software should not just ship quickly, but stay coherent under speed. The human-level shift is subtle: less trust in raw output, more trust in systems that preserve quality under pressure. That seems like the real story.

The Play: Quality Gates for the AI Frontend Boom

From a VC lens, React Doctor is not pure 0-to-1 category creation, but it does look like a sharp wedge into an expanding market: AI-native code quality. TAM is bigger than linting budgets suggest because the buyer is really paying to control review overhead, regressions, and frontend entropy across every React team using AI assistance. PMF signals look solid for a young repo: star velocity is fast, the problem statement is instantly legible, and distribution is built into developer workflows through CLI, CI, and agent installs.

Moat is not classic data network effects, at least not yet. The nearer-term moat is execution speed, opinionated rule design, and workflow embedding. If React Doctor becomes the default quality layer between coding agents and production React apps, switching costs rise because teams start building process, thresholds, and trust around the score.

Winners:

Magic Patterns: Higher demand for AI app scaffolding compounds if teams can add quality controls that keep generated frontend code from decaying after day one.

Cursor: Better output quality and fewer embarrassing React anti-patterns improve retention because assistants feel less like junior interns and more like reliable collaborators.

GitHub: More CI-native code health workflows strengthen Actions and pull request gravity, which keeps quality tooling anchored inside the existing developer surface.

Losers:

Lovable: More scrutiny on generated React erodes the appeal of shipping fast-but-messy UI code, and adapting is hard when speed is the core promise.

Snyk: Narrower positioning around security misses the broader code health budget if teams start buying unified quality signals instead of point solutions.

Atlassian: Manual review rituals lose some of their grip when architecture feedback becomes automated inside PRs, reducing the need for process-heavy coordination around frontend quality.

tl;dr

React Doctor turns React code quality into a score, a policy layer, and a feedback loop for both humans and coding agents. The smart part is the context-aware analysis plus agent integration, which catches bad patterns before they become team-wide habits. Frontend-heavy startups and AI-assisted product teams should pay attention.

Stars: 7,876 | Language: TypeScript