The Push: May 10th, 2026

Browser-native 3D editing, public trading agents, and a Mac that moonlights as a local AI server

SuperSplat: 3D Capture Needs an Editor

github.com/playcanvas/supersplat | License: MIT

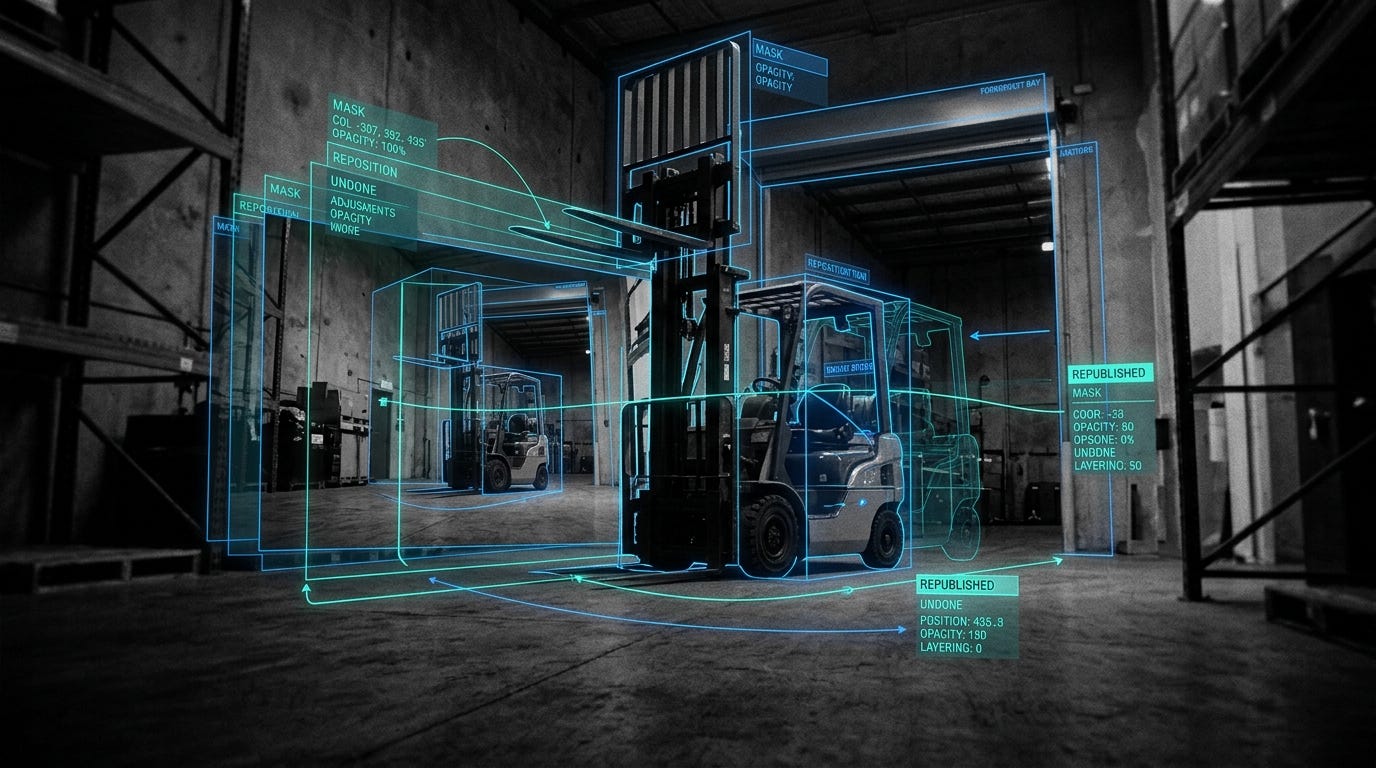

Phone cameras can already turn a room, storefront, or product into a dense Gaussian splat scene, which is basically a photoreal 3D capture made from millions of soft points instead of hard polygons. The catch comes one minute later. Raw captures are messy, huge, and full of junk, e.g. passersby, ceiling noise, or half a chair. SuperSplat matters because it treats this new media format like something worth editing, not just viewing. That sounds obvious, but honestly this is where a lot of “future of 3D” tooling still falls apart.

The Drop: Raw 3D Is Still a Mess

Photogrammetry had Blender. Video had Premiere. 3D Gaussian splatting has mostly had demos, research repos, and export buttons that dump a file on your desk and leave you alone with the consequences.

SuperSplat exists because generated 3D captures are not production-ready. A museum scan includes tourists. A retail scene includes reflections and floating artifacts. A real estate walkthrough carries dead space and giant file sizes. Teams want the wow effect of photoreal 3D, but the cleanup step still feels like a lab exercise. That gap kills adoption faster than rendering quality does.

What makes the frustration sharper is that splats are not intuitive to edit with old-school 3D tools. Mesh editors assume surfaces, topology, and geometry that behave like objects. Splats behave more like volumetric clouds with color and opacity, which means selection, trimming, masking, and optimization need different interaction patterns. SuperSplat goes after that exact pain, turning a novel rendering format into something closer to a usable creative workflow. That is the unlock, not another viewer tab.
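To make the "different interaction patterns" point concrete, here is a minimal sketch of spatial selection over a splat cloud. It assumes a simplified, hypothetical `Splat` record (a center position plus opacity) and two illustrative helpers, `selectInSphere` and `deleteSelected`. None of these names come from the SuperSplat codebase, and real splats also carry scale, rotation, and color data.

```typescript
// Hypothetical splat record for illustration only; not SuperSplat's data layout.
interface Splat {
  position: [number, number, number]; // center of the Gaussian
  opacity: number;                    // 0..1
}

// Return the indices of splats whose centers fall inside a sphere.
// There is no mesh or object hierarchy to pick from, so selection is a
// spatial test run against every point in the cloud.
function selectInSphere(
  splats: Splat[],
  center: [number, number, number],
  radius: number
): number[] {
  const r2 = radius * radius;
  const selected: number[] = [];
  splats.forEach((s, i) => {
    const dx = s.position[0] - center[0];
    const dy = s.position[1] - center[1];
    const dz = s.position[2] - center[2];
    if (dx * dx + dy * dy + dz * dz <= r2) selected.push(i);
  });
  return selected;
}

// Trim the capture by dropping the selected splats (returns a new array).
function deleteSelected(splats: Splat[], selected: number[]): Splat[] {
  const drop = new Set(selected);
  return splats.filter((_, i) => !drop.has(i));
}
```

Calling `selectInSphere` around a stray passerby and passing the result to `deleteSelected` is the splat equivalent of cropping a photo. A production editor would presumably do this against GPU-resident data instead of plain arrays.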

The Stack: Browser-Native 3D Workbench

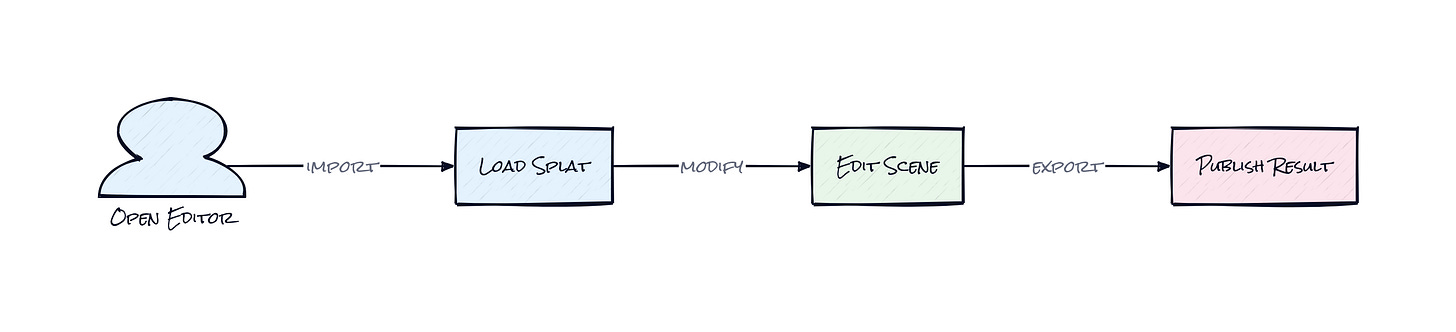

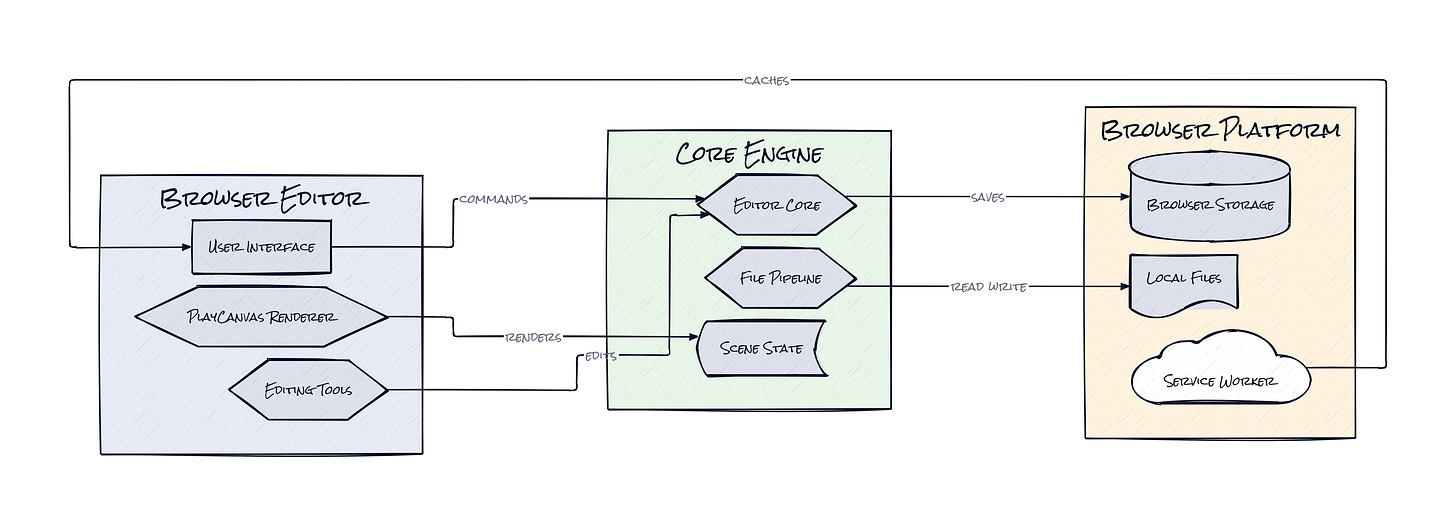

Under the hood, SuperSplat is built in TypeScript on top of PlayCanvas, with a custom editing UI, browser storage support, and rendering paths that use WebGL or WebGPU depending on what the device supports. File IO, serialization, compression, and publishing are all handled in-browser, which keeps the whole tool lightweight and portable, with nothing to install.
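The "depending on capability" part can be pictured as a small fallback decision. The sketch below is an assumption about the general pattern, not PlayCanvas's or SuperSplat's actual code: `Backend`, `Capabilities`, and `chooseBackend` are hypothetical names, and the capability flags are passed in as plain booleans so the logic stays testable outside a browser.

```typescript
// Hypothetical names throughout; this is not PlayCanvas's or SuperSplat's API.
type Backend = "webgpu" | "webgl" | "none";

interface Capabilities {
  webgpu: boolean; // in a browser, typically whether `navigator.gpu` is present
  webgl: boolean;  // in a browser, typically whether a WebGL context can be created
}

// Pick the preferred backend if available, otherwise fall back to the other one.
function chooseBackend(caps: Capabilities, preferred: Backend = "webgpu"): Backend {
  const order: Backend[] =
    preferred === "webgl" ? ["webgl", "webgpu"] : ["webgpu", "webgl"];
  for (const backend of order) {
    if (backend === "webgpu" && caps.webgpu) return backend;
    if (backend === "webgl" && caps.webgl) return backend;
  }
  return "none";
}
```

Keeping the decision in a pure function like this means the same editor code can run on older hardware and newer GPUs without branching all over the UI layer.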

The Sauce: Editing Splats Like They’re First-Class Media

Browser delivery is nice, but the architecture choice that actually matters is SuperSplat’s decision to model splat editing as a full scene system, not a thin layer on top of a renderer. That shows up everywhere: Scene State tracks the evolving composition, Edit Ops package changes as reversible actions, and selection tools cover brush, lasso, polygon, flood, box, and sphere modes because splat data needs spatial editing, not just object picking.
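The "reversible actions" idea is the classic command pattern with an undo stack. Here is a minimal sketch, assuming a hypothetical `EditOp` interface, an `EditHistory` class, and a `makeDeleteOp` helper that flips per-splat deleted flags. These names are illustrative and are not taken from the SuperSplat source.

```typescript
// Hypothetical shapes for illustration; not SuperSplat's actual Edit Ops classes.
interface EditOp {
  name: string;
  execute(): void;
  undo(): void;
}

class EditHistory {
  private done: EditOp[] = [];
  private undone: EditOp[] = [];

  // Run an op and record it; a fresh edit invalidates the redo stack.
  apply(op: EditOp): void {
    op.execute();
    this.done.push(op);
    this.undone = [];
  }

  // Revert the most recent op. Returns false if there is nothing to undo.
  undo(): boolean {
    const op = this.done.pop();
    if (!op) return false;
    op.undo();
    this.undone.push(op);
    return true;
  }

  // Re-run the most recently undone op. Returns false if there is nothing to redo.
  redo(): boolean {
    const op = this.undone.pop();
    if (!op) return false;
    op.execute();
    this.done.push(op);
    return true;
  }
}

// Example op: mark a set of splats as deleted, remembering their prior flags.
function makeDeleteOp(deleted: boolean[], indices: number[]): EditOp {
  let previous: boolean[] = [];
  return {
    name: "delete",
    execute() {
      previous = indices.map((i) => deleted[i] ?? false);
      for (const i of indices) deleted[i] = true;
    },
    undo() {
      indices.forEach((i, k) => {
        deleted[i] = previous[k] ?? false;
      });
    },
  };
}
```

Because each op carries its own inverse, a selection tool only has to produce indices; the history handles the rest. Flagging splats instead of physically removing them is one plausible way to keep undo cheap on scenes with millions of points.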

That sounds like normal editor plumbing, but it is exactly what the category needed. Gaussian splats are awkward because the unit of work is neither a traditional mesh nor a clean object hierarchy. SuperSplat handles this by treating splats as editable datasets inside a persistent scene graph with transform handlers, pivots, animation tracks, and serialization logic. In plain English, the project gives this weird new media format the ergonomics of a real design tool.

Another smart move is that cleanup and publishing live in the same product surface. The repo includes optimization and output machinery, plus an iframe API for embedding the editor into other apps. That means SuperSplat is not only an end-user tool, it is also infrastructure for any company building capture, commerce, mapping, or immersive web workflows. Honestly, the interesting part is not the renderer. It is the fact that PlayCanvas built the missing middle layer between “capture happened” and “something polished can ship.”

The Move: Turn Capture Into a Workflow

Brands, agencies, and product teams can use SuperSplat as the cleanup station between raw capture and customer-facing experience. A furniture company can scan a showroom, strip out visual noise, compress the scene, and publish an interactive walkthrough. A marketplace can turn creator-submitted captures into standardized 3D assets. An event team can preserve a venue in photoreal detail, then trim, annotate, and embed it on the web without asking users to install anything.

Because the tool runs in the browser, the strategic edge is speed of iteration. Internal teams can review the same scene, make selective edits, and push a lighter final version into a website, demo flow, or sales experience. The embedded API also hints at a stronger move: wrap SuperSplat inside a vertical product. Real estate platforms, digital twin startups, and spatial commerce apps can avoid building a custom editor from scratch, then spend their energy on distribution and workflow integration instead. That can save months or years of engineering, not weeks.

The Aura: 3D Stops Being Precious

Captured spaces become a lot more useful when they stop feeling untouchable. That is the human-level thesis here. People are comfortable editing photos and videos because those formats became disposable enough to iterate on. SuperSplat pushes spatial media in that direction, where a room scan or object capture is no longer a sacred artifact from a specialist pipeline, but something a team can crop, clean, tune, and republish casually.

Once that expectation sets in, photoreal 3D gets closer to normal product behavior. Marketing teams test variants. Creators remix scenes. Commerce teams treat immersion like another asset type. Editable media tends to spread faster than pristine media.

The Play: A Better Mousetrap, but a Big One

This looks less like a pure 0-to-1 category creation and more like a sharp wedge into the emerging spatial content stack. The TAM is broader than “3D artists,” spanning e-commerce, real estate, games, cultural heritage, mapping, and enterprise digital twins. PMF signals are early but real: roughly 6,600 stars for a niche editing tool, clear community contributions, and a live browser product suggest demand is coming from actual workflow pain, not just research curiosity.

The moat is not data or network effects yet. It is execution speed plus product taste around a weird format that still lacks standard UX conventions. If SuperSplat becomes the default editing layer embedded across capture apps, switching costs rise fast because teams build process around it, not just files.

Winners:

Luma AI: Faster post-capture cleanup makes consumer-to-pro spatial workflows more reliable, and that compounds into higher retention for creators shipping 3D scenes repeatedly.

Matterport: Lower-friction browser editing expands what customers expect from spatial asset pipelines, which can boost upsell into richer publishing and collaboration layers.

Shopify: Cheaper photoreal 3D asset prep increases the number of merchants who can ship immersive product experiences, raising conversion potential without huge CAC.

Losers:

Scaniverse: Raw capture alone gets commoditized faster when downstream editing becomes easy elsewhere, and adaptation is hard if the workflow stops at acquisition.

Unity: Generic 3D creation environments look heavier for splat-specific cleanup, which weakens appeal for lightweight web publishing use cases.

Adobe: Premium creative tooling faces pressure when browser-native editors make niche media formats editable before the legacy suite fully absorbs them.

tl;dr

SuperSplat turns messy Gaussian splat captures into editable, publishable 3D scenes in the browser. What’s clever is the editor architecture: scene state, reversible edits, spatial selection, and publishing all sit together, so splats behave like a real media format. Worth a look for anyone building spatial commerce, digital twins, or web-native 3D tools.

Stars: 6,640 | Language: TypeScript