The Push: April 30th, 2026

Composable AI tools for browsing, video creation, and terminals that finally remember what’s going on

Browserbase Skills: AI Browsing Gets Distribution

github.com/browserbase/skills

Watching an AI assistant stall on a login screen is a pretty good reminder that “can use tools” and “can actually finish the task” are not the same thing. Clicking around the web sounds trivial until anti-bot checks, session state, CAPTCHAs, and brittle selectors show up. That gap is exactly where Browserbase Skills lands. Instead of treating browser automation like a niche dev workflow, this repo packages a set of actions that make Claude feel less like a chatbot with a tab open and more like an operator that can reliably work through real websites.

The Drop: From Demo Browsing to Real Work

Plenty of AI demos can open a page, scrape some text, and call it a day. The moment the task gets even slightly real (authenticated dashboards, flaky front ends, sites with bot defenses, or workflows that span search, fetch, and browser actions), the whole thing starts to wobble. Agents lose context, fail silently, or get trapped in loops because the browser is being treated like a single tool instead of an environment with its own failure modes.

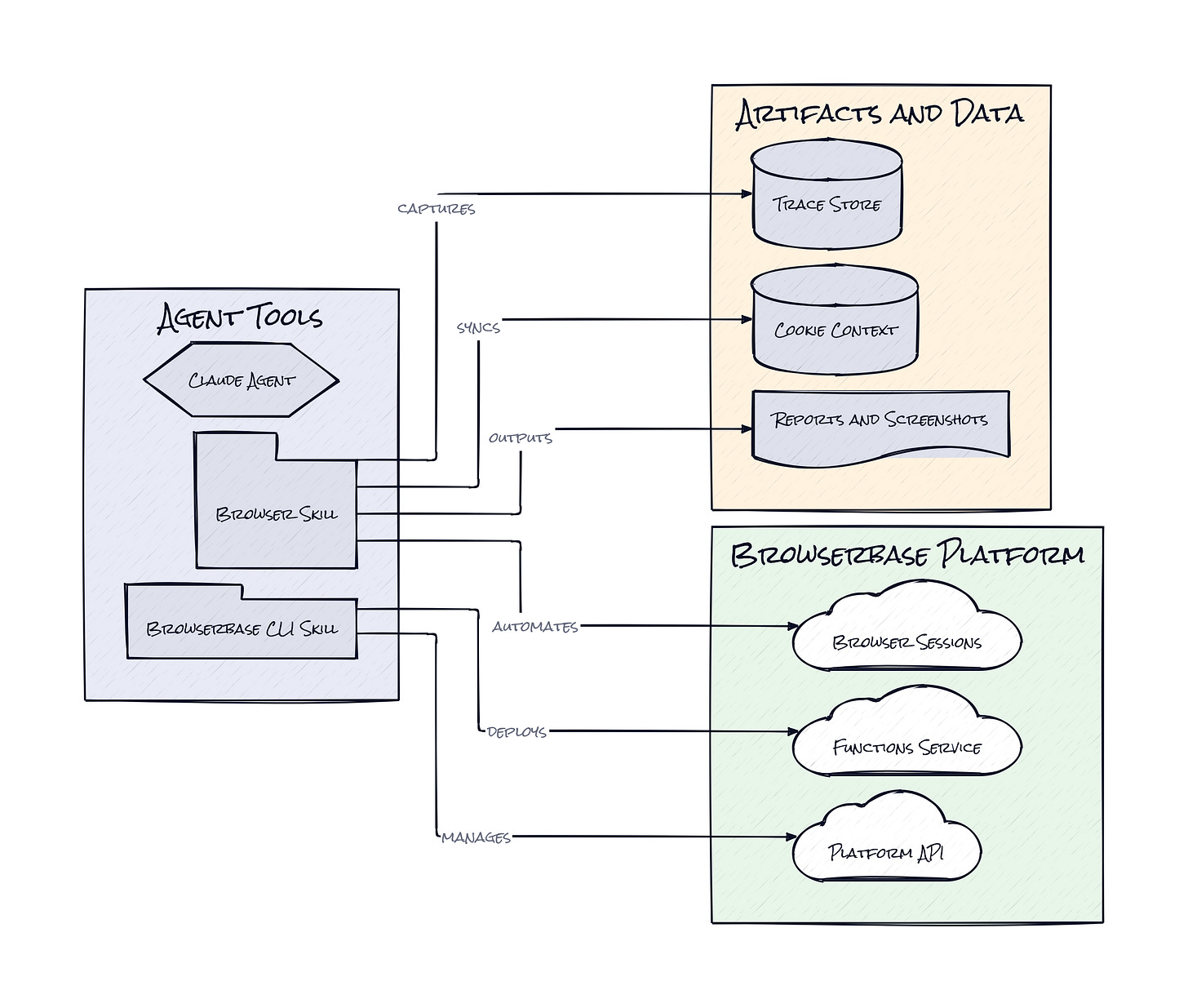

Browserbase Skills exists because that frustration is bigger than one broken click. Claude Code can write code, reason about pages, and call tools, but web work needs operational structure. This repo bundles that structure into installable capabilities, from the browser skill, which runs remote sessions with stealth and CAPTCHA solving, to site-debugger, which diagnoses why an automation failed and outputs a tested playbook. There’s also cookie-sync for authenticated state, browser-trace for full session capture, and ui-test for adversarial QA. Honestly, the interesting pain point is not “AI can’t browse.” It’s that web automation has always been a mess of hidden context, and agents make that mess obvious fast.

The Stack: JavaScript With Agent Plumbing

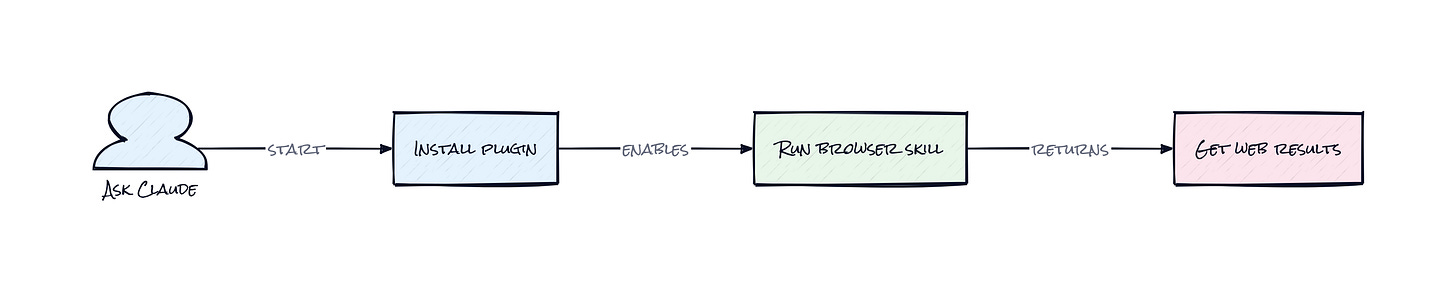

Under the hood, Browserbase Skills is a JavaScript plugin collection built around Claude Code’s skill system and Browserbase’s own tooling. The repo leans on the official bb CLI as the control plane, then layers task-specific skill packages on top, covering browser sessions, cloud functions, tracing, search, fetch, and diagnostics in a tight, modular setup.

The Sauce: Skills as an Execution Layer

What stands out here is the decision to package browsing as a skill marketplace artifact instead of a monolithic automation app. That sounds small, but it changes the architecture. Browserbase Skills is not trying to be yet another agent shell with its own memory, planner, and bespoke interface. It acts more like an execution layer that drops specialized web capabilities directly into Claude’s working environment, where the model already has context about your codebase, your task, and your intent.

That modularity matters because browser work is not one behavior. Static retrieval, authenticated browsing, session analytics, debugging, and UI testing all have different constraints. A repo that treats them as separate but composable abilities gives the model clearer affordances. The fetch skill can grab HTML or JSON without paying the full browser tax. The search skill can source structured results before opening a session. The browser-trace skill captures the CDP firehose, screenshots, and DOM state, then slices that stream into searchable chunks, which is a very practical response to the usual “why did the agent fail?” black box. The site-debugger skill then turns failure analysis into a reusable site playbook, which is the part that feels smartest.
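
To make the “separate but composable” idea concrete, here is a minimal Python sketch of picking the cheapest capability that can plausibly handle a task. This is a toy model, not code from the repo: the `Task` fields, the `choose_skill` function, and the routing rules are all assumptions made for illustration. Only the skill names (fetch, search, browser) come from the description above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Task:
    """Hypothetical description of a web task (not a Browserbase type)."""
    url: Optional[str] = None        # known target URL, if there is one
    needs_login: bool = False        # task requires authenticated state
    needs_interaction: bool = False  # clicks, forms, or JS-rendered content


def choose_skill(task: Task) -> str:
    """Return the name of the cheapest skill that can plausibly do the job.

    Assumed cost ordering for this sketch: fetch < search < browser.
    """
    if task.needs_login or task.needs_interaction:
        # Only a full browser session can hold state and drive the page.
        return "browser"
    if task.url is None:
        # No known target yet, so find candidates before opening anything.
        return "search"
    # Known, static target: plain retrieval is enough.
    return "fetch"
```

Under these assumed rules, a static JSON endpoint routes to fetch, an open-ended question routes to search, and anything behind a login escalates to a browser session. The real skills may well overlap more than this ladder suggests; the point is only that giving each behavior its own name lets the cheaper path be chosen explicitly.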

That design creates a feedback loop: act, inspect, diagnose, retry. In product terms, this is closer to Notion plugins for agents than a single-purpose automation bot. The moat is not raw browser control. Plenty of tools can click buttons. The moat starts to form when browser actions, debugging context, and authenticated state become reusable operating primitives inside the agent’s workflow.
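
The act, inspect, diagnose, retry loop can be sketched in a few lines of Python. Treat this as an illustration under stated assumptions rather than how the repo implements it: `run_with_diagnosis`, `flaky_act`, and `simple_diagnose` are hypothetical names, the “trace” is just a list of strings, and the “playbook” is a plain list of hints carried into the next attempt.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical signatures for this sketch:
#   act(playbook) -> (succeeded, trace_lines)
#   diagnose(trace_lines) -> hint to add to the playbook
Act = Callable[[List[str]], Tuple[bool, List[str]]]
Diagnose = Callable[[List[str]], str]


def run_with_diagnosis(act: Act, diagnose: Diagnose, max_attempts: int = 3) -> Dict:
    """Act, inspect the trace on failure, record a hint, then retry."""
    playbook: List[str] = []  # hints accumulated across attempts
    for attempt in range(1, max_attempts + 1):
        ok, trace = act(playbook)
        if ok:
            return {"ok": True, "attempts": attempt, "playbook": playbook}
        # Failure: turn the captured trace into a reusable hint.
        playbook.append(diagnose(trace))
    return {"ok": False, "attempts": max_attempts, "playbook": playbook}


def flaky_act(playbook: List[str]) -> Tuple[bool, List[str]]:
    """Simulated automation that only succeeds once it knows to wait."""
    if "wait for #login-form" in playbook:
        return True, []
    return False, ["timeout: #login-form not found"]


def simple_diagnose(trace: List[str]) -> str:
    """Simulated diagnosis: map a timeout in the trace to a wait hint."""
    return "wait for #login-form"


result = run_with_diagnosis(flaky_act, simple_diagnose)
```

In this simulation the first attempt fails, the diagnosis adds a hint, and the second attempt succeeds with the hint still in the playbook. The design choice worth noticing is that the playbook is returned rather than discarded, which is what makes a diagnosis reusable on the next run against the same site.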

The Move: Turn Web Friction Into Workflow Advantage

Teams can use Browserbase Skills in a few ways that actually matter. Product and ops groups can run authenticated research flows across dashboards, partner portals, and internal tools without rebuilding every workflow as a custom integration. QA teams can point ui-test at staging environments and let the agent probe for regressions, odd edge cases, and UX breakage after changes land. Founders can have Claude inspect competitors, collect structured findings, and keep moving across pages that would normally break a simple scraper.

Another strong use case is internal acceleration. A company already using Claude Code for product work can add Browserbase Skills and suddenly move from “summarize this repo” to “log into the admin panel, verify the bug, reproduce the issue, and document the fix path.” That is a different class of usefulness. The strategic edge comes from compressing the distance between reasoning and action. Instead of handing browser work off to a separate RPA vendor, test suite, or manual ops process, the same assistant can execute, observe, and report inside one loop. That is where time gets saved, and where trust in agent workflows starts to compound.

The Aura: Software That Can Push the Button

People are getting used to AI that can answer, summarize, and draft. Expectations change once the software can also navigate the messy, authenticated, exception-filled surfaces where actual work lives. Browserbase Skills nudges behavior toward a new default: don’t just ask for advice, ask for completion.

That matters because the browser is still the universal interface for business systems. Countless workflows live behind logins, weird forms, and vendor dashboards that never had clean APIs. When an assistant can operate there with some reliability, the human role shifts upward. Less babysitting, more supervision. Less “here’s what to do,” more “come back when it’s done, and show receipts.”

The Play: Distribution Beats Novelty Here

From a VC lens, this looks less like a 0-to-1 new category and more like a sharp wedge into a very large existing TAM: browser automation, QA, AI agents, and enterprise task execution. The bet is that distribution through Claude’s ecosystem plus strong execution primitives can create PMF faster than another standalone browser agent app with expensive CAC. At 737 stars, this is still early, but the repo already shows healthy intent density because the use cases are concrete, the install path is simple, and the skill surface maps to real jobs.

Winners:

Daytona: More demand for disposable, task-specific work environments compounds when agents also need reliable browser execution alongside code and terminal context.

Browser Use: Stronger category validation lifts adoption because more teams start treating browser-native agent workflows as standard infrastructure rather than experimental tooling.

Intuit: More opportunities open up when assistants can navigate small-business dashboards and financial portals that still rely heavily on browser-first workflows.

Losers:

Relay.app: More pressure hits lightweight automation startups when AI assistants can handle messy edge cases that brittle no-code flows struggle to encode.

Automation Anywhere: More of the RPA value stack gets commoditized as agent-driven browser execution lowers setup friction and makes smaller workflows economically viable.

Workday: More workflow gravity leaks out of rigid enterprise interfaces when users expect assistants to complete tasks across systems instead of learning every admin surface manually.

tl;dr

Browserbase Skills turns Claude into a much more capable web operator by packaging browsing, tracing, debugging, authenticated sessions, and UI testing as modular skills. What’s clever is the architecture: not one giant browser agent, but a composable execution layer. Product teams, QA leads, and AI-heavy startups should look.

Stars: 737 | Language: JavaScript