The Push: April 22nd, 2026

A creative AI studio, an agent call firewall, and the observability layer your LLM stack has been missing

Open Generative AI: Creative Suites Got Too Restrictive

github.com/Anil-matcha/Open-Generative-AI

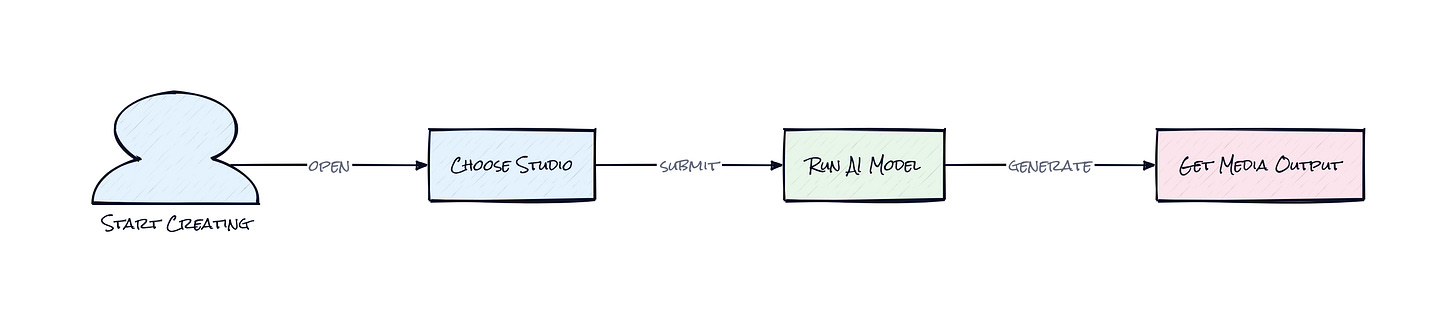

A weird thing happened to AI creativity tools. They got better at generation while getting worse at letting people actually create. You open an image app, type something ambitious, maybe slightly edgy, maybe just commercially inconvenient, and suddenly the product turns into HR software. Open Generative AI goes after that exact frustration. Not just by offering more models, but by packaging Image Studio, Video Studio, Lip Sync Studio, and Cinema Studio into one interface that feels closer to a production desk than a toy prompt box.

The Drop: When Creative Software Starts Saying No

Closed AI media tools keep making the same trade. Sleek interface, strong defaults, fast onboarding, then a wall of missing control right when the work gets serious. One app has good image models but weak video. Another nails video but locks you into subscriptions, watermarks, or content moderation rules that reject prompts for reasons that feel arbitrary. A third gives you lots of model choice but turns the experience into a spreadsheet of APIs and settings.

Open Generative AI is clearly responding to that fragmentation. The repo bundles image generation, video generation, lip sync, cinematic shot controls, and even visual workflows in a single product that you can self-host or run as a desktop app. That matters because creative iteration is rarely one model, one output, done. Real usage looks more like this: generate a concept frame, restyle it with references, animate it into video, add audio-driven motion, then rerun the whole flow with tiny changes. Existing products often make that process expensive, filtered, or annoyingly siloed.

Honestly, the pain point is not just censorship, though the repo leans hard on being unrestricted. The deeper gap is orchestration. People want one place to manage a messy, multi-model media pipeline without handing over all control to a closed platform.

The Stack: JavaScript All the Way Down

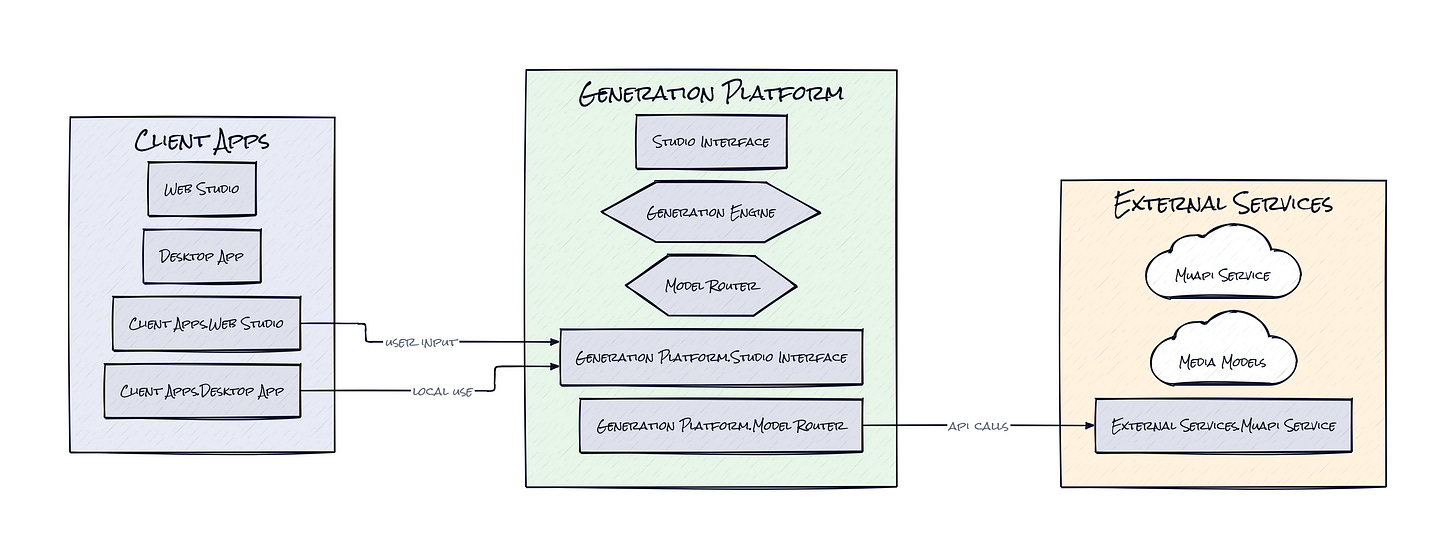

Under the hood, Open Generative AI is mostly JavaScript, with a web app built on Next.js and a desktop wrapper through Electron. The interface is split into reusable studio components, while a Muapi client handles cloud model access and a local inference layer plugs into stable-diffusion.cpp for on-device image generation.

The Sauce: One Interface, Two Compute Worlds

What makes this repo interesting is the dual execution model. Open Generative AI is not merely a front end for a giant list of hosted models, and it is not merely a local desktop toy either. The architecture treats cloud APIs and on-device inference as two interchangeable production paths inside the same creative surface.

That sounds simple, but it solves a real product problem. AI media tools usually force a binary choice. Go hosted for convenience and breadth, or go local for control and privacy. Open Generative AI keeps both in play. The browser and desktop experiences share the same studio abstractions, while the desktop app adds a built-in local generation engine that downloads model weights, manages the inference binary, and runs jobs on your machine. Meanwhile, hosted generation covers the broader catalog of 200-plus image, video, and lip sync models.
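To make the two-path idea concrete, here is a minimal sketch of how a single job type could be routed to either a hosted or an on-device backend. Every name in it (`GenerationBackend`, `route`, the `sensitive` flag, the model IDs) is a hypothetical stand-in and not taken from the Open Generative AI codebase; the backends are stubs that return a label instead of calling a real API or inference binary.

```typescript
// Hypothetical types: none of these names come from the actual repo.
type Target = "cloud" | "local";

interface GenerationJob {
  model: string;
  prompt: string;
  sensitive?: boolean; // assumption: marks work that should stay on-device
}

interface GenerationBackend {
  target: Target;
  supports(model: string): boolean;
  generate(job: GenerationJob): string; // returns a label standing in for an asset
}

// Stub backends. A real cloud backend would call a hosted API and a real local
// one would invoke an inference binary; these only build a descriptive string.
function makeBackend(target: Target, models: string[]): GenerationBackend {
  return {
    target,
    supports: (model) => models.includes(model),
    generate: (job) => `${target}:${job.model}:${job.prompt}`,
  };
}

// Sensitive jobs run locally or fail; other jobs prefer cloud, then local.
function route(job: GenerationJob, backends: GenerationBackend[]): GenerationBackend {
  const candidates = backends.filter((b) => b.supports(job.model));
  const local = candidates.find((b) => b.target === "local");
  const cloud = candidates.find((b) => b.target === "cloud");
  const match = job.sensitive ? local : (cloud ?? local);
  if (!match) throw new Error(`No suitable backend for model "${job.model}"`);
  return match;
}

const backends = [
  makeBackend("cloud", ["video-model-a", "image-model-a"]),
  makeBackend("local", ["image-model-a"]),
];

const publicJob: GenerationJob = { model: "image-model-a", prompt: "neon city" };
const privateJob: GenerationJob = { ...publicJob, sensitive: true };

console.log(route(publicJob, backends).generate(publicJob));   // cloud:image-model-a:neon city
console.log(route(privateJob, backends).generate(privateJob)); // local:image-model-a:neon city
```

The point of the sketch is that the caller never changes: the same job shape goes in, and only the routing rule decides where compute happens.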

That design decision changes the product from “AI generator app” into a routing layer for creative compute. The interface adapts controls based on model capabilities, switches modes depending on whether reference media is present, and persists assets like upload history across sessions. Then Workflow Studio sits on top as a visual pipeline builder, which is where the architecture starts to look genuinely smart. Instead of asking users to manually stitch outputs across disconnected tools, the repo turns model chaining into a native behavior.
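One way to picture the adaptive interface is as a function from a model's declared capabilities to the set of controls shown. The sketch below assumes a hypothetical `ModelCapabilities` descriptor and made-up control names; the repo's real data model may look quite different.

```typescript
// Hypothetical capability descriptor; field names are illustrative only.
interface ModelCapabilities {
  id: string;
  acceptsReferenceImage: boolean;
  aspectRatios: string[];
  maxDurationSeconds?: number; // assumption: present only for video models
}

type Mode = "text-to-media" | "reference-to-media";

interface ControlPanel {
  mode: Mode;
  controls: string[];
}

function buildControls(model: ModelCapabilities, hasReference: boolean): ControlPanel {
  // Reference mode is offered only when media is attached AND the model can use it.
  const mode: Mode =
    hasReference && model.acceptsReferenceImage ? "reference-to-media" : "text-to-media";

  const controls = ["prompt"];
  if (model.aspectRatios.length > 1) controls.push("aspectRatio");
  if (model.maxDurationSeconds !== undefined) controls.push("duration");
  if (mode === "reference-to-media") controls.push("referenceStrength");

  return { mode, controls };
}

const videoModel: ModelCapabilities = {
  id: "example-video-model",
  acceptsReferenceImage: true,
  aspectRatios: ["16:9", "9:16"],
  maxDurationSeconds: 10,
};

const textOnlyModel: ModelCapabilities = {
  id: "example-image-model",
  acceptsReferenceImage: false,
  aspectRatios: ["1:1"],
};

console.log(buildControls(videoModel, false));
console.log(buildControls(videoModel, true));
console.log(buildControls(textOnlyModel, true));
```

Driving the UI from a descriptor like this means adding a model is a data change, not a new screen.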

The interesting part is not the uncensored branding. It is that Open Generative AI packages fragmented generative infrastructure into one coherent operating surface, then lets compute happen wherever it makes the most sense: cloud for breadth, local for autonomy.

The Move: Build a Media Ops Layer, Not Just Content

Teams shipping creative at volume could use Open Generative AI as an internal media lab. A startup making ads, product visuals, short-form video, or creator assets can self-host the interface, standardize prompts and workflows, and stop bouncing between five separate vendors. That cuts tool sprawl, but more importantly it creates repeatability. Once a good visual pipeline exists, e.g. concept art to animation to lip-synced variant, that flow becomes an asset the whole team can reuse.
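A reusable flow of the kind described above can be thought of as an ordered list of steps plus per-run overrides. The following is a rough sketch under that assumption; the step names, parameters, and the `runWorkflow` helper are all hypothetical, and each step only records what it would have done instead of producing real media.

```typescript
// Hypothetical workflow types; step names and parameters are illustrative.
interface Asset {
  kind: "image" | "video";
  history: string[]; // log of applied steps, standing in for real media
}

type Params = Record<string, string>;

interface Step {
  name: string;
  params: Params;
  run(input: Asset | null, params: Params): Asset;
}

const conceptArt: Step = {
  name: "conceptArt",
  params: { prompt: "product hero shot" },
  run: (_input, p) => ({ kind: "image", history: [`conceptArt(${p.prompt})`] }),
};

const animate: Step = {
  name: "animate",
  params: { motion: "slow pan" },
  run: (input, p) => {
    if (!input) throw new Error("animate needs an input asset");
    return { kind: "video", history: [...input.history, `animate(${p.motion})`] };
  },
};

const lipSync: Step = {
  name: "lipSync",
  params: { audio: "voiceover-en" },
  run: (input, p) => {
    if (!input) throw new Error("lipSync needs an input asset");
    return { kind: "video", history: [...input.history, `lipSync(${p.audio})`] };
  },
};

// Run steps in order; overrides are merged over each step's saved defaults.
function runWorkflow(steps: Step[], overrides: Record<string, Params> = {}): Asset {
  let asset: Asset | null = null;
  for (const step of steps) {
    asset = step.run(asset, { ...step.params, ...overrides[step.name] });
  }
  if (!asset) throw new Error("workflow has no steps");
  return asset;
}

const adPipeline = [conceptArt, animate, lipSync];
const base = runWorkflow(adPipeline);
const variant = runWorkflow(adPipeline, { lipSync: { audio: "voiceover-es" } });

console.log(base.history.join(" -> "));
console.log(variant.history.join(" -> "));
```

Here the saved pipeline is the team asset, and a new variant is a one-line override instead of a rebuilt flow.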

Founders and creative leads should notice the strategic angle here. Model providers are changing constantly, prices move, and access policies change with zero warning. Open Generative AI gives a company a control plane above that churn. Swap providers, test multiple aesthetics, or move sensitive jobs on-device without retraining everyone on a new stack. That lowers switching pain and preserves institutional knowledge.

Agencies, indie studios, and AI-native marketing teams probably get the fastest payoff. The repo can become the internal place where experimentation happens before outputs hit client-facing tools. That is a meaningful advantage when speed matters, but consistency matters more.

The Aura: Expectation Creep Is the Point

Creative people are getting less tolerant of software that insists on one approved workflow. That expectation shows up fast once AI enters the process. If a tool can generate media, users start wanting choice of model, choice of modality, choice of hosting, choice of guardrails, or none at all.

Open Generative AI leans into that expectation instead of smoothing it away. The human shift here is subtle but important: creators stop behaving like subscribers inside a product and start acting like operators of a production system. That is the bigger unlock. Not just faster outputs, but a stronger sense that the machine works for the workflow, not the other way around.

The Play: Infrastructure Margin Hides in Creative UX

This looks less like a pure 0-to-1 category and more like a better mousetrap in a very large, still-fragmented TAM: AI creative tooling, prosumer media software, and lightweight production infrastructure. The PMF signal is decent: 6,286 stars for a project sitting at the intersection of image, video, and self-hosting suggest real pull, especially because forks and community chatter matter more here than polished enterprise packaging. The moat is not raw model access, which gets commoditized fast. The moat is execution speed, workflow stickiness, and becoming the default control layer teams build habits around, which improves LTV and reduces CAC through community distribution.

Winners:

Photoroom: More demand compounds for specialized image editing APIs when orchestration layers make multi-step creative pipelines easier to assemble and swap.

Runway: Stronger position emerges as teams using open creative hubs still need premium video models, and that keeps high-end generation in the budget.

Autodesk: Broader acceptance of AI-assisted production stacks expands the market for professional creative workflows that connect ideation with downstream asset pipelines.

Losers:

Krea: Differentiation erodes when interface-first AI creativity can be replicated in open tools with wider model choice and fewer platform rules.

Synthesia: Premium pricing gets harder to defend if open lip sync and video chaining cover enough of the use case for scrappier teams.

Shutterstock: Subscription value weakens as generated visual content becomes easier to produce internally, especially for marketing teams that once licensed stock by default.

tl;dr

Open Generative AI turns a pile of image, video, lip sync, and local inference models into one self-hostable creative control panel. The clever bit is the shared interface across cloud and on-device generation, which makes vendor switching and workflow reuse much easier. Worth a look for agencies, AI-native marketing teams, and founders building media-heavy products.

Stars: 6,286 | Language: JavaScript