The Push: April 18th, 2026

Local-first AI workspaces, lean GPU kernels, and agents that act like real software systems

Thunderbolt: The Anti Lock-in AI Client

github.com/thunderbird/thunderbolt | License: MPL-2.0

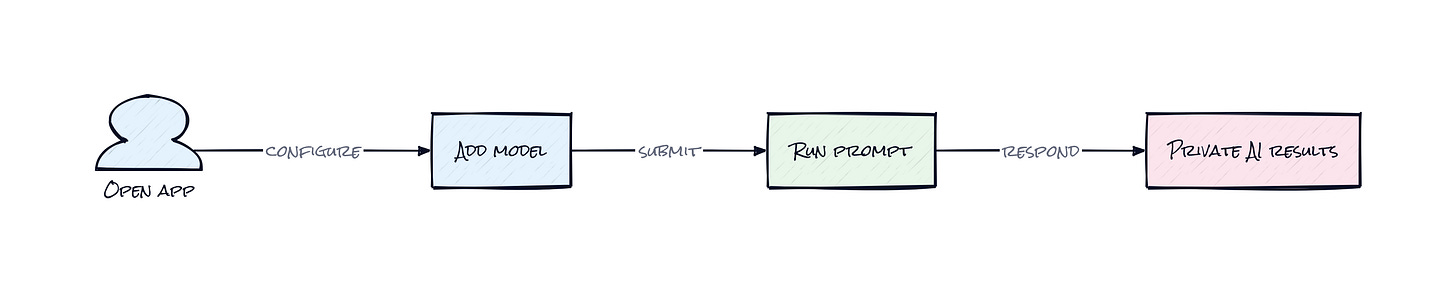

ChatGPT in the browser is convenient right up until legal asks where the data went, security asks who can audit it, and finance notices every serious workflow now depends on one vendor’s pricing mood. That tension is exactly where Thunderbolt lands. Thunderbolt is not chasing a shinier chatbot; it is trying to turn AI into infrastructure you can actually govern. Honestly, that framing matters more than the UI. The repo is early, but the bet is clear: AI clients should be portable, deployable, and controlled by the organization using them.

The Drop: Where Enterprise AI Gets Stuck

Plenty of teams want AI inside the company. Very few want the tradeoffs that come with today’s default setup. A product team wants local models for sensitive roadmap docs, support wants a frontier model for customer replies, and compliance wants every connection, identity flow, and data path documented. Suddenly the “simple” AI rollout becomes a stack of exceptions.

Thunderbolt exists because current AI clients keep forcing a false choice: polished UX with vendor dependence, or self-hosted tooling that feels like a weekend project. That gap is painfully real in regulated industries, internal knowledge work, and any company that has already been burned by SaaS sprawl. The repo’s promise (choose your models, own your data, deploy on-prem) is less about ideology and more about procurement reality.

Mozilla’s Thunderbird brand adds a useful clue here. Email became universal because clients could connect to many servers, not because one provider won everything. Thunderbolt applies that same logic to modern AI. The interesting frustration is not “people need another assistant.” It is that companies need an AI front end that does not quietly become a strategic dependency.

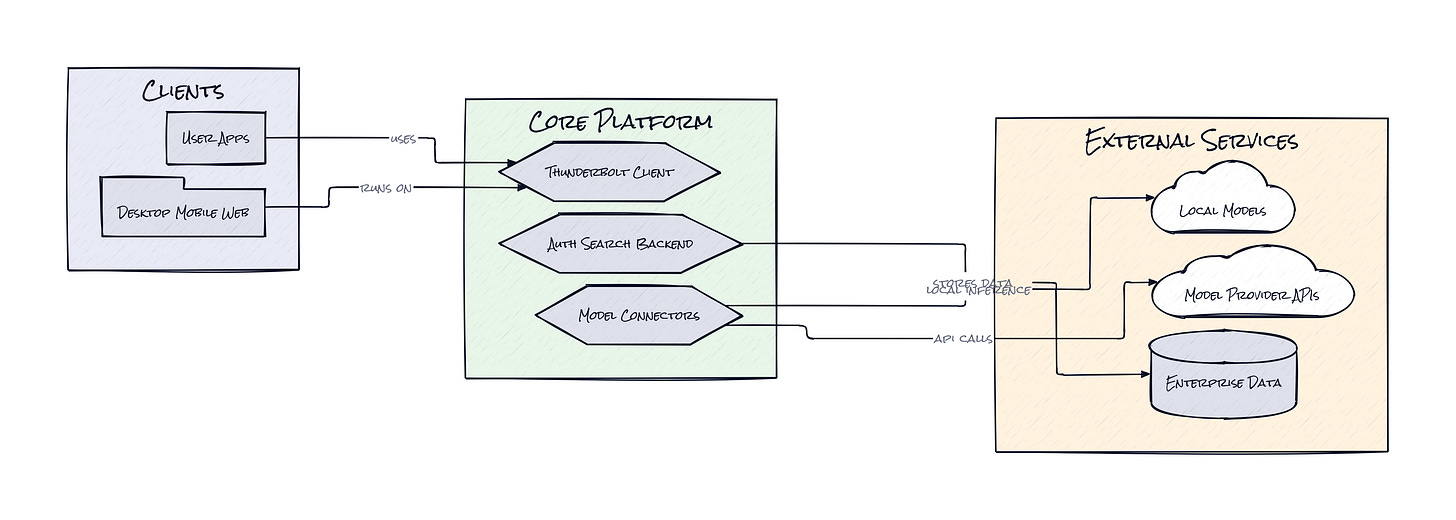

The Stack: Cross-Platform Without the Rewrite Tax

Underneath, Thunderbolt is mostly TypeScript across a React and Vite front end, with Tauri handling desktop packaging through Rust. The backend uses Bun, Drizzle, and SQLite-adjacent data tooling, while PowerSync handles synchronized local-first data across devices. That combo feels very intentional: web speed, native reach, and enterprise deployment flexibility.

The Sauce: Local-First AI, Not Just Self-Hosted AI

Buried in the architecture is the part that actually makes Thunderbolt interesting. PowerSync gives the product a synced local database model, so the app is designed around state living on the device and then reconciling across environments, not round-tripping every action to a central cloud. That sounds subtle. It is not.

A lot of “private AI” products are really hosted dashboards with a different billing model. Thunderbolt seems to be building a client that can run across web, mobile, and desktop while preserving a consistent local experience, then layering enterprise controls and model choice on top. The result is closer to an operating layer for AI work than a single chat pane.

That architecture matters because model choice alone is not enough. Swapping OpenAI for Ollama is easy to pitch and hard to make usable. The hard part is keeping conversation history, preferences, integrations, identity, and device state coherent across platforms without turning the app into a thin wrapper around someone else’s API. Thunderbolt’s sync layer, local storage setup, and on-prem deployment path attack that problem directly.
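To make the local-first idea concrete, here is a minimal sketch of the pattern: writes land in an on-device store, queue up for upload, and merge with changes from other devices. Every name in it (`LocalFirstStore`, `Note`, the last-writer-wins rule) is a hypothetical illustration, not Thunderbolt code or the PowerSync API, and a real sync engine handles far more than this.

```typescript
// Hypothetical sketch: illustrative names only, not Thunderbolt or PowerSync APIs.
type Note = { id: string; text: string; updatedAt: number };

class LocalFirstStore {
  private rows = new Map<string, Note>();
  private outbox: Note[] = [];

  // Writes land on the device first, so the UI never waits on the network.
  write(note: Note): void {
    this.rows.set(note.id, note);
    this.outbox.push(note);
  }

  read(id: string): Note | undefined {
    return this.rows.get(id);
  }

  // Changes that still need to be uploaded once a connection is available.
  pending(): Note[] {
    return [...this.outbox];
  }

  // Drop queued changes only after the server confirms it stored them.
  acknowledge(uploaded: Note[]): void {
    const done = new Set<Note>(uploaded);
    this.outbox = this.outbox.filter((queued) => !done.has(queued));
  }

  // Merge rows from other devices. Last-writer-wins on updatedAt is an assumed rule.
  applyRemote(remote: Note[]): void {
    for (const incoming of remote) {
      const local = this.rows.get(incoming.id);
      if (!local || incoming.updatedAt > local.updatedAt) {
        this.rows.set(incoming.id, incoming);
      }
    }
  }
}
```

The design choice worth noticing is that reads and writes never touch the network. Sync becomes a background concern built from `pending`, `acknowledge`, and `applyRemote`, which is roughly the shape the paragraph above describes.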

There is also a second smart decision here: the app treats model providers as configurable infrastructure. Frontier, local, and OpenAI-compatible endpoints can all fit into the same environment. That is much more powerful than a one-model product. It means procurement can change vendors without retraining everyone on a new interface, and teams can route different tasks to different models based on cost, latency, or data sensitivity.
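A rough sketch of what "providers as configurable infrastructure" could look like in practice follows. The provider names, the example hosted URL, the cost figures, and the `quality` score are all made-up placeholders, and `pickProvider` is a hypothetical helper, not something taken from the Thunderbolt repo. The local URL assumes Ollama running on its default port with its OpenAI-compatible endpoint.

```typescript
// Hypothetical sketch: names, URLs, and scores are placeholders, not Thunderbolt config.
type Provider = {
  name: string;
  baseUrl: string; // assumed to speak an OpenAI-compatible API
  local: boolean; // true when inference stays on hardware the company controls
  costPer1kTokens: number; // USD, illustrative only
  quality: number; // made-up relative score, higher is better
};

type Task = { sensitive: boolean; maxCostPer1kTokens: number };

const providers: Provider[] = [
  { name: "local-ollama", baseUrl: "http://localhost:11434/v1", local: true, costPer1kTokens: 0, quality: 2 },
  { name: "hosted-frontier", baseUrl: "https://llm.example.com/v1", local: false, costPer1kTokens: 0.01, quality: 5 },
];

function pickProvider(task: Task, candidates: Provider[]): Provider | undefined {
  // Sensitive tasks may only use local providers; every task must fit its budget.
  const eligible = candidates.filter(
    (p) => (!task.sensitive || p.local) && p.costPer1kTokens <= task.maxCostPer1kTokens,
  );
  // Prefer the best eligible model; on equal quality, take the cheaper one.
  return [...eligible].sort(
    (a, b) => b.quality - a.quality || a.costPer1kTokens - b.costPer1kTokens,
  )[0];
}
```

Because routing only looks at a provider record, swapping vendors means editing the `providers` list while the interface people use stays the same.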

The Move: Build Your Company’s AI Front Door

Instead of asking whether Thunderbolt can replace ChatGPT for everyone, the sharper move is to use it as the controlled front door for AI inside an organization. A security-conscious company could self-host the backend, connect local inference through Ollama or llama.cpp for sensitive work, then add approved external providers for tasks where quality matters more than isolation. That setup gives one interface, multiple model lanes, and a cleaner governance story.

Founders and product leaders should look at Thunderbolt as a policy surface. Who gets access to which models? Which teams can use search or external integrations? Which conversations stay fully local? Those decisions usually get buried inside scattered tools and browser tabs. Thunderbolt turns them into an application layer you can shape.
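Those questions map naturally onto a small policy table. The sketch below is one assumed way to express them in code; the team names, model names, and the `canUseModel` and `canUseIntegrations` helpers are hypothetical and do not reflect how Thunderbolt actually models permissions.

```typescript
// Hypothetical sketch: team names, model names, and rules are placeholders.
type Team = "product" | "support" | "compliance";

type TeamPolicy = {
  allowedModels: string[]; // which models the team may call
  externalIntegrations: boolean; // search and third-party connectors
  localOnly: boolean; // conversations must never leave company hardware
};

type ModelInfo = { name: string; local: boolean };

const policies: Record<Team, TeamPolicy> = {
  product: { allowedModels: ["local-ollama"], externalIntegrations: false, localOnly: true },
  support: { allowedModels: ["local-ollama", "hosted-frontier"], externalIntegrations: true, localOnly: false },
  compliance: { allowedModels: ["local-ollama"], externalIntegrations: false, localOnly: true },
};

function canUseModel(team: Team, model: ModelInfo): boolean {
  const policy = policies[team];
  // A local-only team is blocked from hosted models even if one is listed by mistake.
  if (policy.localOnly && !model.local) return false;
  return policy.allowedModels.includes(model.name);
}

function canUseIntegrations(team: Team): boolean {
  return policies[team].externalIntegrations;
}
```

The point is less the specific rules than where they live: in one reviewable place, instead of spread across browser tabs and individual tool settings.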

There is also a user adoption advantage. Employees rarely want “the compliant tool”; they want the tool that feels normal. Cross-platform support across desktop, mobile, and web helps here because behavior sticks when the workflow follows people everywhere. That is the strategic angle: not just reducing lock-in, but owning the default interface through which the company interacts with AI.

The Aura: Control Becomes a Product Feature

Enterprise buyers are starting to expect AI the way they expect email or file storage, as something that should fit into existing rules, not blow them up. Thunderbolt taps into that expectation. People want systems that remember context, travel across devices, and respect boundaries without demanding trust in a black box.

What changes at a human level is subtle but important. AI stops feeling like an off-platform excursion and starts feeling like part of the company’s actual operating environment. That makes usage more routine, less performative, and probably more durable. Private by design is not just a compliance line anymore; it is becoming a usability standard.

The Play: Owning the AI Client Layer

This looks less like 0-to-1 category creation and more like a sharp wedge into a very large existing TAM: enterprise productivity software, AI copilots, and secure collaboration tooling. The upside comes from becoming the neutral AI client layer, a position with strong expansion potential if model orchestration, policy management, and synced context become sticky. PMF is still early (1,348 stars is not breakout velocity yet), but the Mozilla-adjacent credibility, on-prem focus, and clear community interest suggest a real buyer pain point.

Moat is not raw code. Moat would come from distribution into security-conscious enterprises, switching costs around configured providers and workflows, and the behavioral stickiness of being the place employees start every AI task. CAC could be ugly in enterprise, but LTV gets interesting if Thunderbolt becomes the governed wrapper around all model spend.

Winners:

Nexa AI: More demand compounds for local inference tooling because a polished client layer makes on-device models usable inside normal company workflows.

Glean: Better positioning emerges as customers want secure, enterprise-grade AI experiences connected to internal knowledge rather than consumer chat tabs.

Microsoft: More AI usage can flow into Azure-hosted and self-managed model deployments when enterprises want choice without abandoning existing identity and compliance stacks.

Losers:

TypingMind: Generic power-user chat interfaces lose edge because enterprise buyers increasingly care about governance, deployment control, and multi-device policy, not just prompt ergonomics.

Perplexity: Default destination status weakens for workplace research when organizations prefer AI access inside approved, auditable clients tied to internal systems.

OpenAI: Distribution power erodes at the margin when the interface layer gets abstracted and model providers become interchangeable line items behind a managed client.

tl;dr

Thunderbolt turns AI chat into deployable infrastructure instead of a vendor-owned tab. The clever bit is the local-first sync architecture plus provider abstraction, which makes model choice and data control feel operational, not theoretical. Teams dealing with compliance, procurement, or multi-model workflows should pay attention.

Stars: 1,348 | Language: TypeScript