The Push: April 17th, 2026

Governed agent upgrades, shared AI workspaces, and a game-studio-style crew for coordinated code and review

Evolver: Prompt Tweaks Need Governance

github.com/EvoMap/evolver | License: GPL-3.0

An agent breaks in the same way three times this week. Each fix lives in a chat transcript, a copied prompt, or somebody's memory of what worked last Tuesday. That is a ridiculous way to run software that is supposedly getting smarter over time. Evolver goes after that mess by treating agent improvement like a governed system instead of a string of vibesy prompt edits. The pitch sounds intense, maybe even a little overbuilt, but the underlying complaint is very real: AI teams keep shipping behavior changes with almost no durable record of why they happened.

The Drop: When Prompt Ops Hits the Wall

Chat-based AI tooling made iteration feel cheap, right until teams had to maintain the same agent for weeks or months. Then the cracks showed. A bad output appears, someone tweaks the system prompt, another person adds a rule, a third person pastes in a workaround from Slack, and suddenly nobody knows which change actually fixed anything.

Evolver exists because prompt engineering stops being creative play once an agent becomes part of a real workflow. Support bots, coding agents, and internal research assistants all generate logs, repeat failure modes, and accumulate weird behavioral patches. Yet the standard operating model is still half folklore, half copy-paste.

That gap is what this repo attacks. Instead of treating every agent update as a fresh conversation, Evolver treats changes as governed evolutionary steps. The project introduces two kinds of named assets, Genes and Capsules, which are reusable units of behavioral change. It also adds an auditable EvolutionEvent, a recorded trace of what changed and why. The pain point is not that AI agents cannot improve. The pain point is that improvement becomes impossible to trust once the history gets messy.

The Stack: Node as the Control Plane

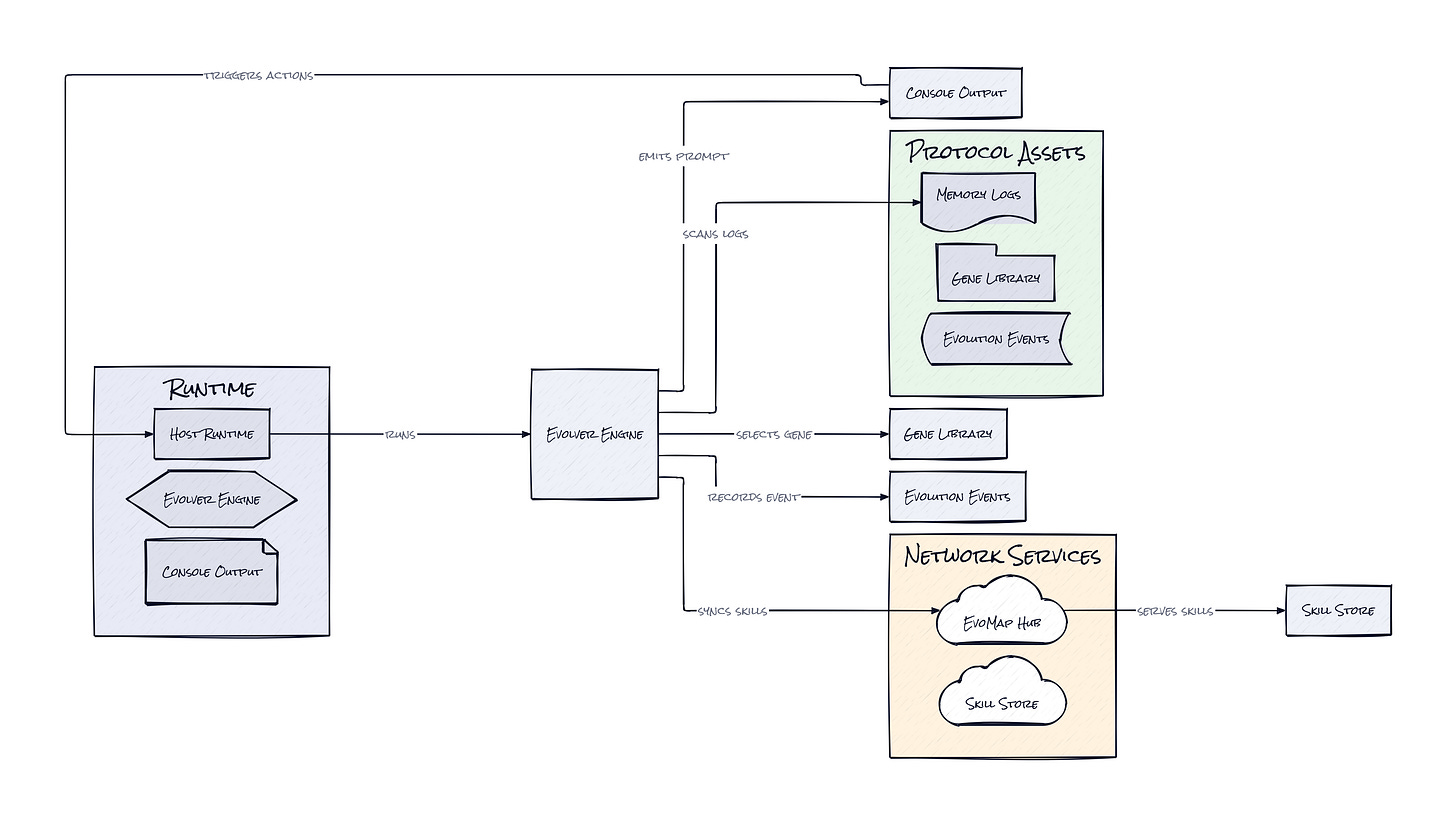

Under the hood, Evolver is a JavaScript and Node-based orchestration layer, not a model framework. Core pieces handle log analysis, asset selection, Git-backed rollback, local state awareness, and optional network sync with the broader EvoMap hub.

Several adapters connect the engine to tools like Claude Code, Cursor, and Codex, which makes the repo feel less like a chatbot toy and more like control software for agent behavior.

The Sauce: Evolution as a Protocol, Not a Prompt

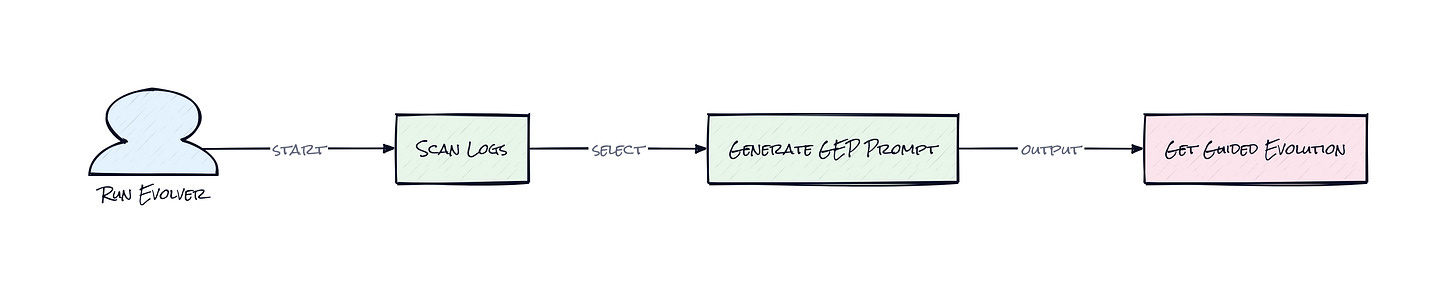

Protocol design is the center of gravity here. Evolver's defining move is the GEP Protocol, a structured system for turning runtime signals into constrained next-step instructions, instead of letting an agent freestyle its own self-improvement. That may sound bureaucratic, but honestly, the interesting part is exactly that constraint.

Here's the architecture in plain terms. Evolver watches an agent's memory and logs, pulls out recurring signals such as error patterns or stagnation, then matches those signals against a library of curated evolution assets. Those assets are not random snippets. Genes encode narrower behavioral adaptations, while Capsules package more composite changes. The engine then emits a protocol-bound prompt that tells the host agent what kind of mutation is allowed, under what strategy, and with what review or rollback posture.
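To make that flow concrete, here is a minimal sketch of the signal-to-prompt pipeline in JavaScript. Treat it as an illustration under assumptions, not Evolver's code: the asset shapes, the function names (`extractSignals`, `selectAsset`, `buildPrompt`), the regex patterns, and the output fields are all hypothetical stand-ins for whatever the repo actually defines.

```javascript
// Hypothetical sketch: none of these names or fields come from the Evolver codebase.

// A tiny asset library. A "gene" is a narrow adaptation, a "capsule" a composite change.
const ASSETS = [
  { id: "gene-retry-timeout", kind: "gene", matches: ["timeout"],
    instruction: "Add a bounded retry around the failing call." },
  { id: "capsule-harden-io", kind: "capsule", matches: ["timeout", "parse_error"],
    instruction: "Validate inputs and add retries on I/O paths." },
];

// Pull recurring signals out of log lines.
// A signal only counts if it shows up at least `minCount` times.
function extractSignals(logLines, minCount = 2) {
  const patterns = {
    timeout: /timed? ?out/i,
    parse_error: /parse error|unexpected token/i,
  };
  const counts = {};
  for (const line of logLines) {
    for (const [name, re] of Object.entries(patterns)) {
      if (re.test(line)) counts[name] = (counts[name] || 0) + 1;
    }
  }
  return Object.keys(counts).filter((n) => counts[n] >= minCount).sort();
}

// Pick the eligible asset that covers the most observed signals.
// An asset is eligible only if every signal it targets was observed.
function selectAsset(signals, assets = ASSETS) {
  const eligible = assets.filter((a) => a.matches.every((m) => signals.includes(m)));
  eligible.sort((a, b) => b.matches.length - a.matches.length);
  return eligible[0] || null;
}

// Emit a bounded instruction object rather than free-form advice.
function buildPrompt(signals, asset, strategy = "repair") {
  return {
    protocol: "sketch-v0", // placeholder label, not the real GEP format
    signals,
    assetId: asset ? asset.id : null,
    strategy,
    allowedMutation: asset ? asset.instruction : "none",
    requiresReview: true,
  };
}

const logs = ["Request timed out after 30s", "upstream timeout", "ok"];
const signals = extractSignals(logs);
const prompt = buildPrompt(signals, selectAsset(signals));
console.log(JSON.stringify(prompt, null, 2));
```

The point of the sketch is the shape of the output: the host agent receives a structured object that names the allowed mutation and the review posture, instead of an open-ended request to improve itself.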

That matters because the repo separates decision support from execution. Evolver does not directly rewrite code or run arbitrary commands. It generates bounded instructions, records an EvolutionEvent, and leaves the host runtime or a human reviewer to decide whether the change should proceed. This creates an audit trail and sharply reduces blast radius.
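The split between proposing and executing can be sketched in a few lines of JavaScript. Everything here is an assumption made for illustration: the event fields, the in-memory `eventLog`, and the `proposeChange` and `review` functions are hypothetical and do not mirror Evolver's real EvolutionEvent schema or API.

```javascript
// Hypothetical sketch: field and function names are illustrative, not Evolver's API.

const eventLog = []; // audit trail, kept in memory for this sketch

// Record a proposal. Nothing is executed here; the engine only describes a change.
function proposeChange({ signal, assetId, instruction }) {
  const event = {
    id: eventLog.length + 1,
    signal,
    assetId,
    instruction,
    status: "proposed",
    decidedBy: null,
  };
  eventLog.push(event);
  return event;
}

// A reviewer (a human or the host runtime) makes the call.
// Each event can be decided exactly once, so the history stays unambiguous.
function review(eventId, reviewer, approve) {
  const event = eventLog.find((e) => e.id === eventId);
  if (!event) throw new Error(`unknown event ${eventId}`);
  if (event.status !== "proposed") throw new Error(`event ${eventId} already decided`);
  event.status = approve ? "approved" : "rejected";
  event.decidedBy = reviewer;
  return event;
}

const proposal = proposeChange({
  signal: "timeout",
  assetId: "gene-retry-timeout",
  instruction: "Add a bounded retry around the failing call.",
});
review(proposal.id, "alice", false);
console.log(eventLog);
```

Because the proposal function has no side effects beyond logging, a rejected change costs nothing to discard, which is the reduced blast radius described above.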

There is also a subtle second-order benefit. Once changes are represented as reusable assets instead of one-off fixes, teams can build a shared library of agent behaviors, publish them, rank them, and potentially trade them across a network. That starts to look less like prompt engineering and more like package management for machine behavior. That is a much bigger idea.

The Move: Turn Agent Behavior Into an Asset

Plenty of teams already have agents in production, but very few have a disciplined way to improve them. Evolver gives those teams a process layer. Run it against a flaky internal assistant, capture recurring failures in logs, and let the engine propose bounded repair or hardening steps in review mode. That alone creates a cleaner feedback loop than chasing fixes through chat history.

More strategically, Evolver can become the memory and governance layer for any company betting on long-lived AI workers. Product teams could use it to compare stability-focused versus innovation-focused strategies over time. Ops teams could use the audit trail for compliance-heavy environments where every behavioral change needs a reason. Platform teams could distill recurring fixes into reusable skills and distribute them across multiple agents.

The offline-first design also matters. Core behavior works locally, while the optional EvoMap network adds sharing, worker coordination, and leaderboards. That means a startup can begin with a single internal agent and later graduate into a broader skill marketplace model without swapping systems. That path from local control to networked intelligence is where the business upside starts to compound.

The Aura: Software That Learns With Receipts

Trust changes when AI behavior stops feeling mysterious. People are surprisingly tolerant of systems that adapt, as long as those adaptations are legible, reviewable, and reversible. The psychological unlock here is not raw autonomy. It is accountable adaptation.

Evolver points toward a world where teams expect agents to improve continuously, but also expect those improvements to come with provenance. Which signal triggered the change? Which policy bounded it? Which prior fix did it reuse? Those questions sound operational, yet they shape human confidence. Once AI behavior can be inspected like product decisions, agents stop feeling like temperamental black boxes and start feeling like managed infrastructure.

The Play: Governance Layer or Niche Protocol Bet

From a VC lens, Evolver sits between a better mousetrap and a 0-to-1 category wedge. The immediate market is prompt ops, agent reliability, and AI governance, all large and getting larger as enterprise spend shifts from experimentation to production. The broader TAM is any company running persistent AI workers, which pulls in support, software, security, research, and workflow automation. Nearly 4,000 stars in a short window suggest early curiosity, and the repo already shows PMF signals around a clear pain point: teams need agent improvement to be repeatable, auditable, and shareable.

The moat is not classic data network effects yet. It is closer to workflow lock-in plus asset accumulation. Once a company encodes successful fixes as Genes, Capsules, and event histories, switching means losing institutional memory. If EvoMap's network actually becomes a marketplace for validated behaviors, that could push this from tooling into protocol territory, with stronger defensibility and lower CAC through community distribution. The open question is whether teams want one shared standard, or just lighter-weight internal controls.

Winners:

Atlassian: Agent governance slots neatly into Jira and Opsgenie-style workflows, which raises LTV by making AI changes part of existing operational process.

Duolingo: Persistent tutoring agents get safer to personalize when every behavioral mutation is logged and reviewable, which compounds learning quality and trust.

Cloudflare: Edge-hosted AI workers become easier to manage at scale when adaptation can be constrained, audited, and rolled back close to deployment.

Losers:

Moveworks: Black-box enterprise assistant behavior gets harder to defend when customers start expecting transparent mutation history and portable improvement assets.

Zapier: Lightweight automation loses some edge if agent workflows demand governance, review, and reusable behavioral assets rather than quick prompt glue.

Khan Academy: Proprietary tutoring improvements look less differentiated if open ecosystems start sharing validated educational agent behaviors across networks.

tl;dr

Evolver turns agent improvement into a governed protocol instead of a pile of prompt edits. What stands out is the asset-based architecture, where reusable behavior changes and audit logs become part of the system itself. Teams running long-lived AI assistants, especially in ops-heavy or compliance-sensitive contexts, should pay attention.

Stars: 3,965 | Language: JavaScript