The Push: April 13th, 2026

Senior developer logic for bloat-free code, assistants with permanent memories, and market trends read like a sentence

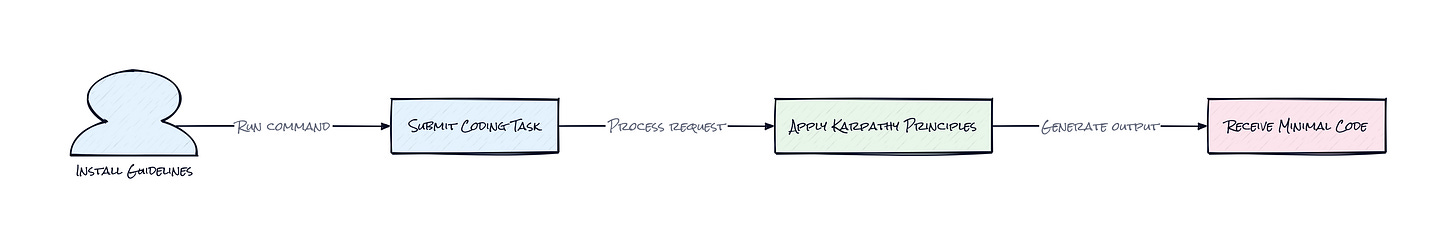

Andrej Karpathy Skills: Stop Letting AI Overengineer Your Prototypes

github.com/forrestchang/andrej-karpathy-skills

You ask a coding agent to fix a minor CSS alignment issue on your landing page. Ten minutes later you find it has refactored your entire navigation component, introduced a new state management library, and somehow broken the production build. This frustration is the hallmark of the current LLM era: models are hyper-competent but lack the basic judgment to know when to stop. They often behave like an over-caffeinated junior engineer who treats every small task as a chance to rewrite the core architecture. The friction isn't a lack of knowledge but a lack of restraint, and a lack of clarity about the boundaries of the existing codebase.

The Drop: Observations From the Frontier

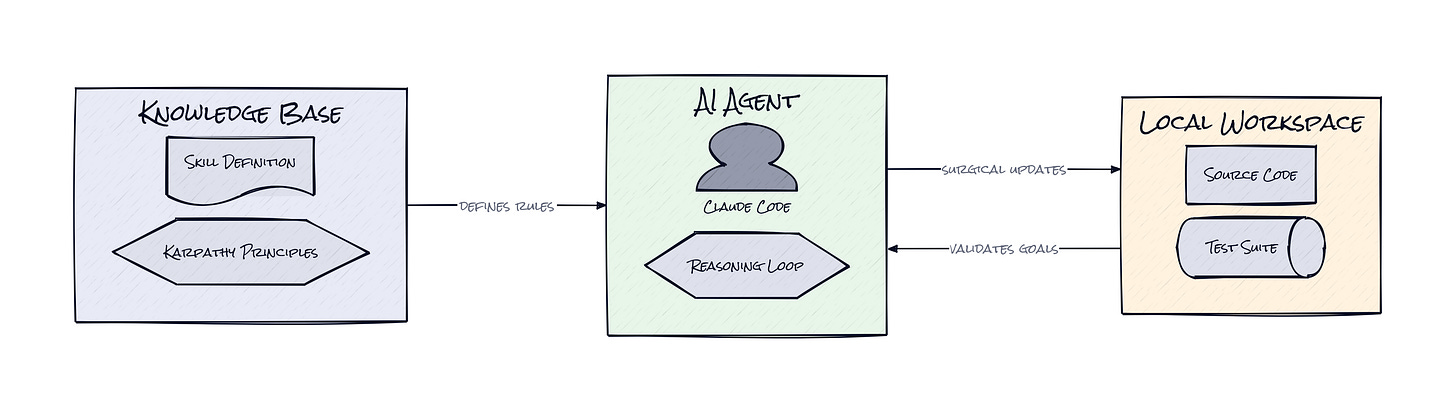

Andrej Karpathy recently sparked a massive conversation in the engineering world by pointing out how often current LLMs make silent, incorrect assumptions on the user's behalf. These models tend to overcomplicate simple APIs, leave dead code behind, and change or delete comments they do not fully understand. The project forrestchang/andrej-karpathy-skills is a direct response to these behavioral quirks. It distills those high-level observations into a concrete set of instructions designed to steer Claude, specifically the Claude Code agent, toward more responsible and surgical behavior.

The core struggle for any non-developer trying to use AI to build software is the "drift" that happens over a long conversation. As the context window fills up, the model loses track of the simplicity you originally asked for and starts hallucinating complex abstractions to solve problems that don't exist yet. This repository offers a way to lock the model into a mindset of simplicity and verification. It tries to open up the "black box" of AI coding by instructing the model to show its work and ask permission before making sweeping changes. By adopting these skills, you turn the AI from an unpredictable collaborator into a disciplined tool that respects the constraints of your specific project.

The Stack: Prompt Engineering as Infrastructure

The technical foundation here is remarkably lean, consisting primarily of a Contextual Constraint Layer delivered via a `CLAUDE.md` file or a dedicated plugin for the Claude Code interface. This configuration relies on the system's ability to ingest markdown-based instructions that govern its reasoning process across entire sessions. It leverages the native tool-calling capabilities of modern agents without requiring any external databases or complex server-side logic beyond the LLM itself.
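To make the idea of a "constraint layer" concrete: the guidelines are ordinary text that sits in the model's context alongside every request. Claude Code reads a project's `CLAUDE.md` on its own, so you never write this plumbing yourself. The sketch below is only a hypothetical Python illustration of the mechanism. `load_guidelines`, `build_system_prompt`, and the base prompt wording are names invented for this example.

```python
from pathlib import Path
from typing import Optional

# Hypothetical base prompt; real agents ship their own, much longer system prompt.
BASE_PROMPT = "You are a coding agent working inside this repository."


def load_guidelines(repo_root: Path) -> Optional[str]:
    """Return the text of CLAUDE.md at the repo root, or None if there is none."""
    path = repo_root / "CLAUDE.md"
    if not path.is_file():
        return None
    return path.read_text(encoding="utf-8").strip()


def build_system_prompt(guidelines: Optional[str], base: str = BASE_PROMPT) -> str:
    """Append project guidelines to the base prompt so they shape every turn."""
    if not guidelines:
        return base
    return f"{base}\n\n## Project guidelines (from CLAUDE.md)\n{guidelines}"
```

In this sketch, calling `build_system_prompt(load_guidelines(Path.cwd()))` once per session means the same rules accompany every request. No database or server-side logic is involved, just text in the context.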

The Sauce: Engineering Metacognitive Boundaries

The brilliance of this project lies in how it uses Metacognitive Steering to shape the AI's approach before a single line of code is written. Instead of just giving the model better instructions for Python or JavaScript, these guidelines change how the model "thinks" about the task itself. The project pushes a shift from imperative instructions, where you tell the AI what to do step by step, to Declarative Intent, where you define what a successful outcome looks like and let the model work out the safest path to get there. This design choice targets the core failure of most AI agents: the tendency to rush into execution without a plan.

By mandating a "Think Before Coding" phase, the instructions require the model to state its assumptions explicitly. When a prompt admits more than one reading, the model must also lay out the competing interpretations before it touches any files. This is clever because it uses the model's own reasoning tokens to debug the user's intent. If your request is ambiguous, the model is told to stop and ask for clarification rather than guess its way into a massive, unnecessary pull request. It effectively builds a feedback loop into the inference process itself, making the AI act as its own project manager.
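Here is a minimal sketch of the "Think Before Coding" gate. It assumes a hypothetical agent harness that has already listed the assumptions and candidate interpretations for a request. The repository states this rule in prose for the model to follow. The `Plan` class and `preflight` function below are invented for illustration and are not part of the project.

```python
from dataclasses import dataclass, field


@dataclass
class Plan:
    """What the agent believes about a request before editing any file."""
    request: str
    assumptions: list[str] = field(default_factory=list)
    interpretations: list[str] = field(default_factory=list)


def preflight(plan: Plan) -> str:
    """Decide whether the agent may start editing or must ask the user first."""
    if len(plan.interpretations) > 1:
        # Ambiguity halts the run: list every reading instead of picking one silently.
        options = "\n".join(
            f"  {i}. {text}" for i, text in enumerate(plan.interpretations, start=1)
        )
        return f"CLARIFY: '{plan.request}' could mean:\n{options}\nWhich one did you intend?"
    # A single reading may proceed, but its assumptions are stated out loud.
    stated = "; ".join(plan.assumptions) or "none"
    return f"PROCEED (assumptions: {stated})"
```

The design choice is that ambiguity never gets resolved silently. One interpretation proceeds with its assumptions on record, while two or more turn into a question for the user.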

Another sophisticated design decision is the focus on Surgical Patching over wholesale file rewrites. LLMs often prefer to rewrite an entire function or file, plausibly because regenerating everything is the easiest way for them to keep the result coherent. This leads to "drive-by refactoring," where unrelated code changes for no reason. These guidelines provide a strict framework for touching only the lines the task actually requires. That preserves the "legacy" knowledge of your codebase, such as specific comments or odd edge-case handling, which the AI might otherwise treat as mess to be cleaned up. It treats your existing code as a sacred constraint rather than a rough draft in need of an AI makeover.
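One way to enforce "touch only the lines the task requires" from outside the model is to diff the file before and after an edit. The check then rejects changes that land outside an agreed line range or exceed a line budget. This is a hypothetical post-edit check built on Python's standard `difflib`, not something the repository ships. `allowed` and `max_lines` are illustrative parameters.

```python
import difflib


def touched_lines(before: str, after: str) -> set[int]:
    """1-based line numbers of `before` that an edit replaced or deleted.

    A pure insertion is recorded as the line it was inserted before
    (len(before_lines) + 1 for an append at the end of the file).
    """
    old, new = before.splitlines(), after.splitlines()
    matcher = difflib.SequenceMatcher(a=old, b=new, autojunk=False)
    touched: set[int] = set()
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        if tag == "equal":
            continue
        if i1 == i2:  # insertion: no original lines consumed
            touched.add(i1 + 1)
        else:  # replace or delete: original lines i1+1 .. i2 changed
            touched.update(range(i1 + 1, i2 + 1))
    return touched


def is_surgical(before: str, after: str, allowed: range, max_lines: int = 10) -> bool:
    """True if every touched line lies in `allowed` and the edit stays within budget."""
    touched = touched_lines(before, after)
    return len(touched) <= max_lines and touched.issubset(allowed)
```

Consider a four-line file where only line 2 changes: that edit passes with `allowed=range(2, 3)`. An edit that also rewrites line 1 fails, which is exactly the drive-by refactoring the guidelines warn against.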

Finally, the project emphasizes a "Goal-Driven Execution" loop that requires the AI to define a test or other verification step before it considers a task finished. This is the ultimate guardrail. It turns the AI into a self-correcting system that is instructed not to report success until it has objective evidence that the new code works as intended. This shift from "trust me, I wrote it" to "here is the passing test" is the difference between a prototype that breaks tomorrow and a stable piece of software.
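The "Goal-Driven Execution" loop can be sketched as a small harness. It keeps applying candidate changes until an objective check passes and refuses to claim success otherwise. Everything here is hypothetical: `run_until_verified` stands in for the agent's own loop, and the toy `slugify` task is invented to show the control flow.

```python
from typing import Callable


def run_until_verified(
    attempt: Callable[[int], None],
    verify: Callable[[], bool],
    max_attempts: int = 3,
) -> str:
    """Apply candidate changes until `verify` passes; never report success without it."""
    for n in range(1, max_attempts + 1):
        attempt(n)  # apply the n-th candidate change
        if verify():  # objective check, e.g. the test written for this task
            return f"DONE after {n} attempt(s): verification passed"
    return f"BLOCKED after {max_attempts} attempts: verification still failing"


# Hypothetical toy task: slugify() must lowercase its input as well as replace spaces.
candidates: list[Callable[[str], str]] = [
    lambda s: s.replace(" ", "-"),          # first try: forgets to lowercase
    lambda s: s.lower().replace(" ", "-"),  # second try: meets the goal
]
state: dict[str, Callable[[str], str]] = {"slugify": candidates[0]}


def apply_candidate(n: int) -> None:
    """Swap in the n-th candidate (reusing the last one if n runs past the list)."""
    state["slugify"] = candidates[min(n, len(candidates)) - 1]


def slugify_test() -> bool:
    """The success criterion, defined before any attempt is judged."""
    return state["slugify"]("Hello World") == "hello-world"


result = run_until_verified(apply_candidate, slugify_test)
```

The key design choice is that the success message can only come from a passing `verify()` call. Running out of attempts produces an explicit failure instead of an optimistic summary.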

The Move: Hardening Your Development Workflow

Adopting this repository is less about a specific coding task and more about setting a strategic standard for how your team works with AI agents. You can treat the `CLAUDE.md` file as a foundational piece of project infrastructure, like a configuration file or a linter. By dropping it into the root of your repository, you ensure that anyone using Claude Code on the project works under the same rules of simplicity and surgical precision. This is a massive advantage for product managers or solo founders who manage external contractors or use AI to move fast. It acts as a preventive quality-control layer that discourages overengineering before it reaches your main branch.

Beyond the file itself, the real move is using the Claude Code plugin marketplace to install these skills globally. This lets you carry these Karpathy-inspired coding principles into every session without manually copy-pasting rules. If you are building a product where speed is everything but technical debt is a constant threat, these guidelines serve as a low-overhead "CTO in a box" that keeps the AI's output clean and focused. You can concentrate on high-level feature requirements while the AI stays focused on the genuinely important task of not breaking things.

The Aura: Guarding the Human Intent

This technology represents a shift in the power dynamic between humans and generative models. We are moving away from the era of "AI as a replacement for labor" and into "AI as a highly constrained executor of intent." The psychological shift here is profound: you stop being a manager who micromanages every line and start being an architect who defines the boundaries of the sandbox. It acknowledges that the primary risk of AI isn't that it is too dumb, but that it is too eager to show off its intelligence at the expense of clarity and stability.

There is a certain humility in these guidelines that mirrors the philosophy of senior engineering. Truly great developers know that the best code is often the code you didn't write. By enforcing this fundamental principle on an AI, we are essentially teaching the model to value restraint. This represents a human-centric approach to automation where the goal is to amplify the user's vision while suppressing the model's tendency toward chaotic creativity. It turns the interaction from a stressful "cleanup" job into a collaborative refinement process where the AI finally respects the silence between the lines of code.

The Play: The Contextual Optimization Market

The venture capital thesis for a project like this is centered on the shift from model-centric to context-centric value. We are seeing a 0-to-1 transition in how developers interact with LLMs, moving from generic chat windows to deeply integrated, agentic environments. In this new market, the moat isn't the underlying model, since Claude, GPT, and Llama are increasingly commoditized, but the "contextual steering" that makes those models reliable in a production setting. This repository is a clear signal of product-market fit for "reliability layers" in the AI stack. The high star velocity and community engagement show a massive, unmet demand for tools that tame the erratic behavior of coding agents.

The Total Addressable Market for this kind of optimization is essentially the entire software development industry. As every company becomes an "AI-augmented" company, the winners will be the platforms that can offer the highest level of trust and the lowest level of hallucination-driven technical debt. This project is a better mousetrap because it doesn't require a new platform; it simply improves the existing ones. The underlying behavior change is highly sticky because once a user experiences the efficiency of surgical, goal-driven AI edits, going back to "generic" AI chat feels like taking a step backward into chaos. This is a play on the "picks and shovels" of the AI era, where the most valuable tools are those that make the existing high-powered models actually usable for serious work.

Winners:

Replit: Replit stands to gain a massive advantage by building these kinds of metacognitive constraints directly into its AI-powered IDE. Doing so would reduce the frequency of broken environments for beginner users and raise the perceived intelligence of its agentic features.

Cursor: Cursor wins if it standardizes these rules as a "default mode" for professional developers who maintain large-scale codebases. That would compound its lead in the specialized coding-tool market by offering a level of reliability that generic web-based chats cannot match.

Vercel: Vercel benefits from a higher volume of successful deployments as AI agents become more disciplined and less likely to push code that fails automated checks. This increases its platform stickiness as the "final mile" for verified, AI-generated software.

Losers:

Atlassian: Atlassian faces a decline in the relevance of traditional project management tools as agents become capable of self-managing their tasks and documentation. This erodes the need for manual tracking of technical debt when the AI is programmed to avoid creating it in the first place.

Toptal: Toptal loses its competitive edge as the barrier between "junior" and "senior" level output blurs through the use of sophisticated AI steering guidelines. This makes high-end human freelance labor harder to justify for routine development tasks that these disciplined agents can now handle.

GitLab: GitLab risks falling behind if its internal AI features remain stuck in a "suggestion" model rather than a "surgical agent" model. Catching up gets harder as users migrate toward tools that offer more granular control over the AI's reasoning and execution logic.

tl;dr

Andrej Karpathy Skills is a specialized configuration file, built on Andrej Karpathy's observations, that pushes Claude to stop overengineering code and start acting like a senior developer. It uses clever reasoning constraints to make the AI ask questions before making assumptions and touch only the code it is supposed to. This is for anyone tired of AI-generated bloat.

Stars: 23,209